Does Qwen 3.5 live up to the hype?

I tested 9 local LLMs on a Claude Code skill I actually use every day. Not a coding benchmark. A real multi-step agentic task described in natural language as a markdown file.

My /linkedin-cover-image skill works like this:

- Read a LinkedIn post file and analyze the content

- Decide whether it is a post or an article and choose the matching dimensions

- Pick the right layout template (quote, listicle, contrast)

- Write a complete HTML cover image - typography, colors, spacing, decorative elements

- Screenshot it to PNG

One command. No hand-holding. The model handles the entire workflow.
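The skill itself is a natural-language markdown file that the model interprets, not a program. To make the decisions it delegates more concrete, here is a hypothetical Python sketch of three of them: post or article, which layout, and what HTML to emit. The pixel dimensions, the keyword heuristics for picking a layout, and the `plan_cover` / `render_html` helpers are all assumptions for illustration. They are not the real skill's contents.

```python
from dataclasses import dataclass
from html import escape

# ASSUMPTION: placeholder sizes in pixels, not taken from the original skill.
DIMENSIONS = {
    "post": (1200, 1200),
    "article": (1920, 1080),
}


@dataclass
class CoverPlan:
    kind: str    # "post" or "article"
    width: int
    height: int
    layout: str  # "quote", "listicle" or "contrast"


def plan_cover(text: str, is_article: bool) -> CoverPlan:
    """Hypothetical stand-in for the judgment calls the skill leaves to the model."""
    kind = "article" if is_article else "post"
    width, height = DIMENSIONS[kind]

    lines = [line.strip() for line in text.splitlines() if line.strip()]
    lowered = f" {text.lower()} "  # pad so a leading "Not" still matches " not "
    # Crude heuristics (assumptions): three or more bullet lines suggest a listicle,
    # "not" / "vs" suggests a contrast, anything else falls back to a quote.
    if sum(line.startswith("- ") for line in lines) >= 3:
        layout = "listicle"
    elif " not " in lowered or " vs " in lowered:
        layout = "contrast"
    else:
        layout = "quote"
    return CoverPlan(kind, width, height, layout)


def render_html(headline: str, plan: CoverPlan) -> str:
    """Emit a minimal HTML page sized to the chosen dimensions."""
    return (
        "<!DOCTYPE html>\n"
        f'<html><body style="margin:0;width:{plan.width}px;height:{plan.height}px">'
        f'<h1 class="layout-{plan.layout}">{escape(headline)}</h1>'
        "</body></html>"
    )


if __name__ == "__main__":
    plan = plan_cover("It is not a demo problem. It is a DevOps problem.", is_article=False)
    print(plan)
    print(render_html("Demo vs DevOps", plan))
```

In the real skill, the model makes each of these calls by reading the post. That reading is where the weaker models slipped, for example by producing an article cover for a post. The last step, screenshotting to PNG, needs a headless browser. With Playwright's Python API it would look roughly like `page.set_content(html)` followed by `page.screenshot(path="cover.png")`, though the original skill does not say which tool it uses.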

Same post. Same skill. One shot each. All results in the images.

—

Results for 9 models (5 usable / 2 with issues / 2 failed):

Usable:

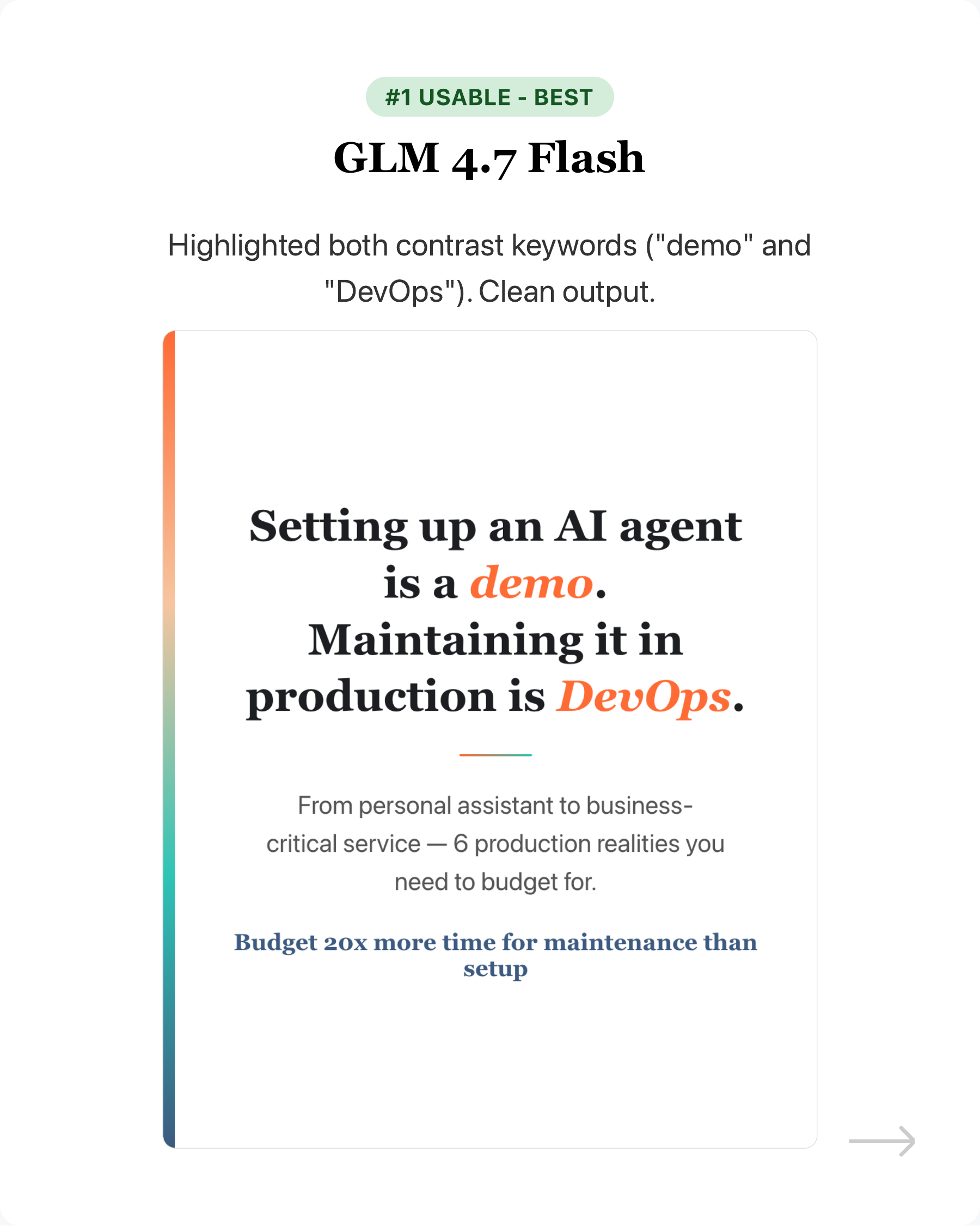

- GLM 4.7 Flash - Best. Highlighted both contrast keywords (“demo” and “DevOps”). Clean output.

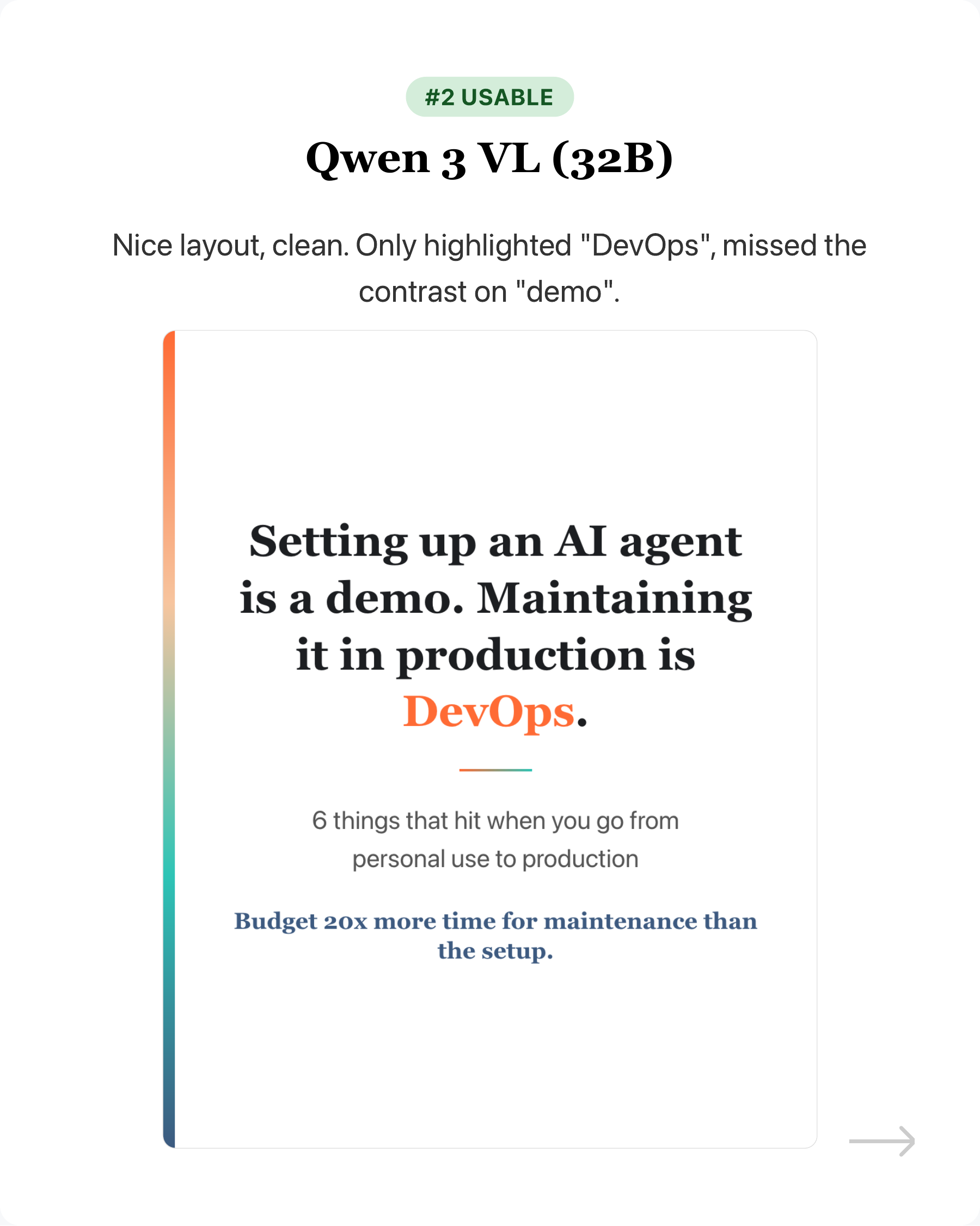

- Qwen 3 VL (32B) - Nice layout, clean. Only highlighted “DevOps”, missed the contrast on “demo”.

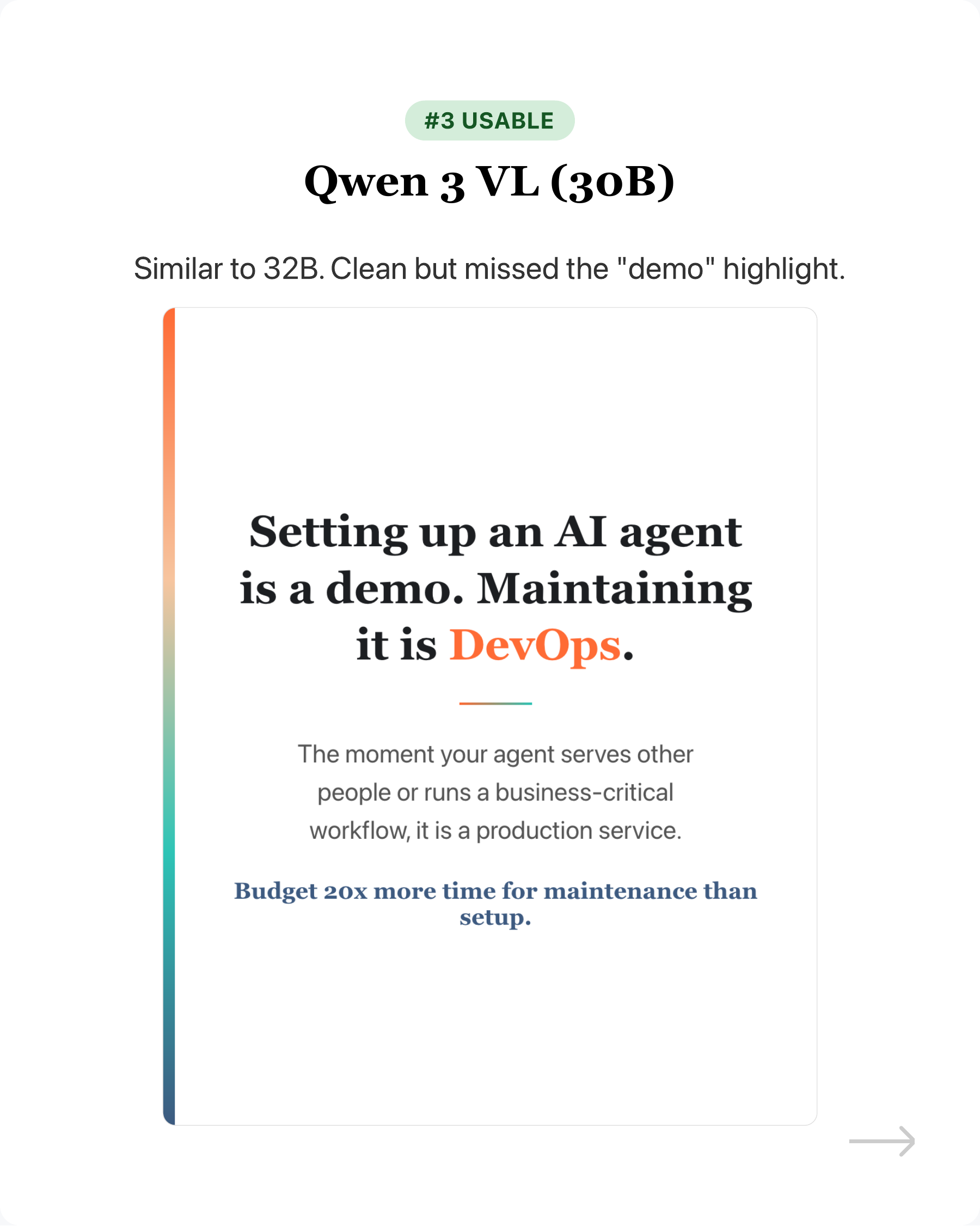

- Qwen 3 VL (30B) - Similar to 32B. Clean but missed the “demo” highlight.

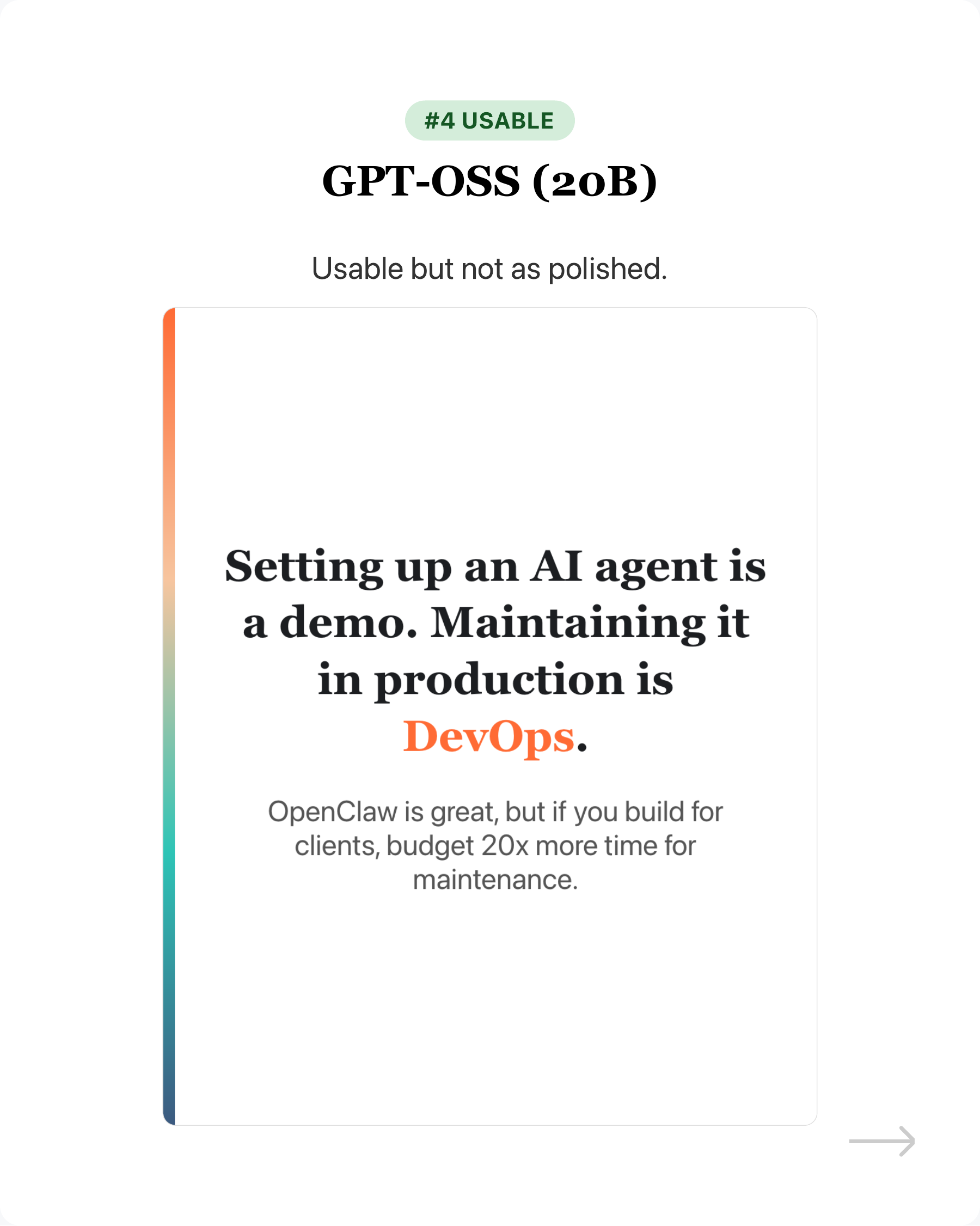

- GPT-OSS (20B) - Usable but not as polished.

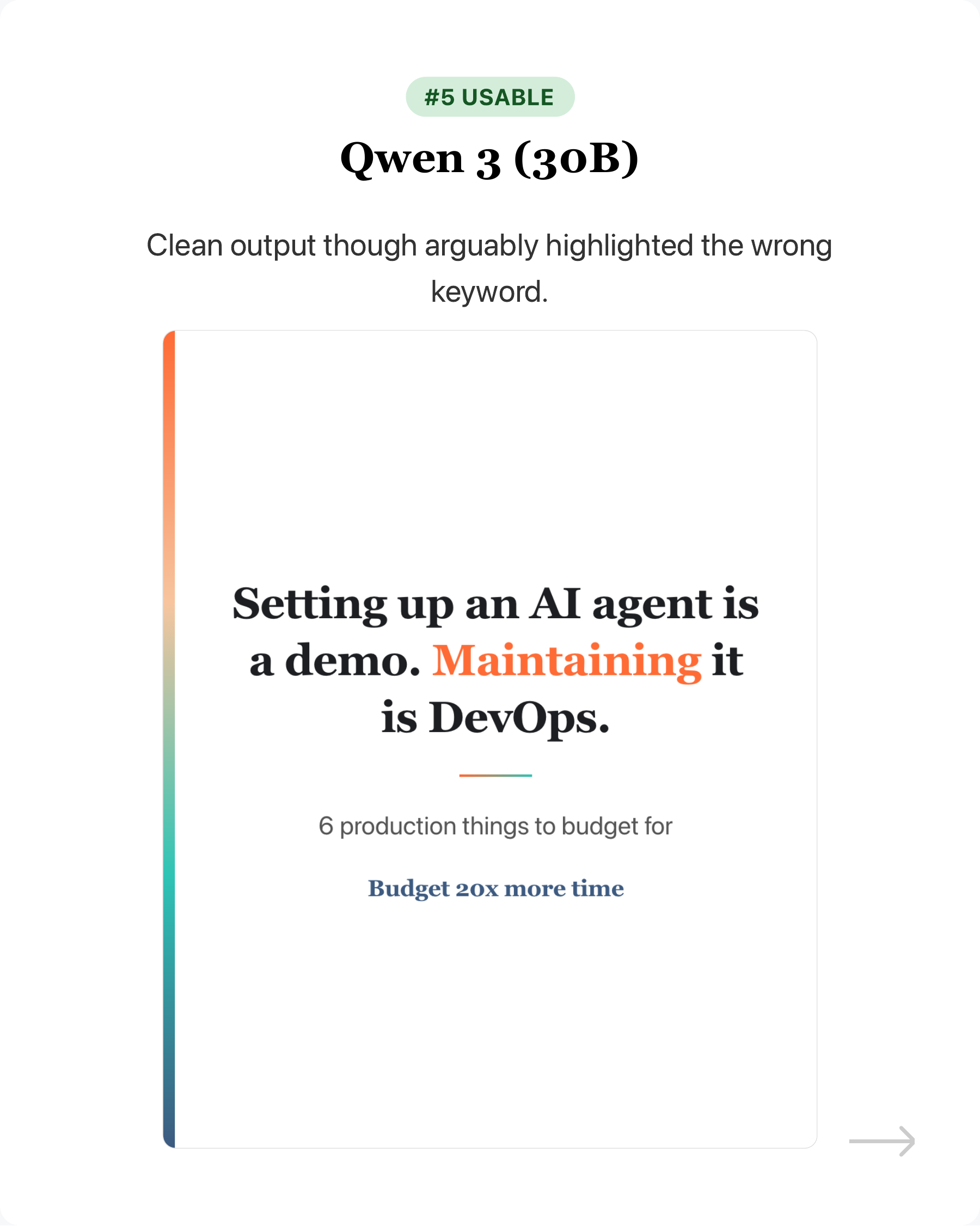

- Qwen 3 (30B) - Clean output, though it arguably highlighted the wrong keyword.

Issues:

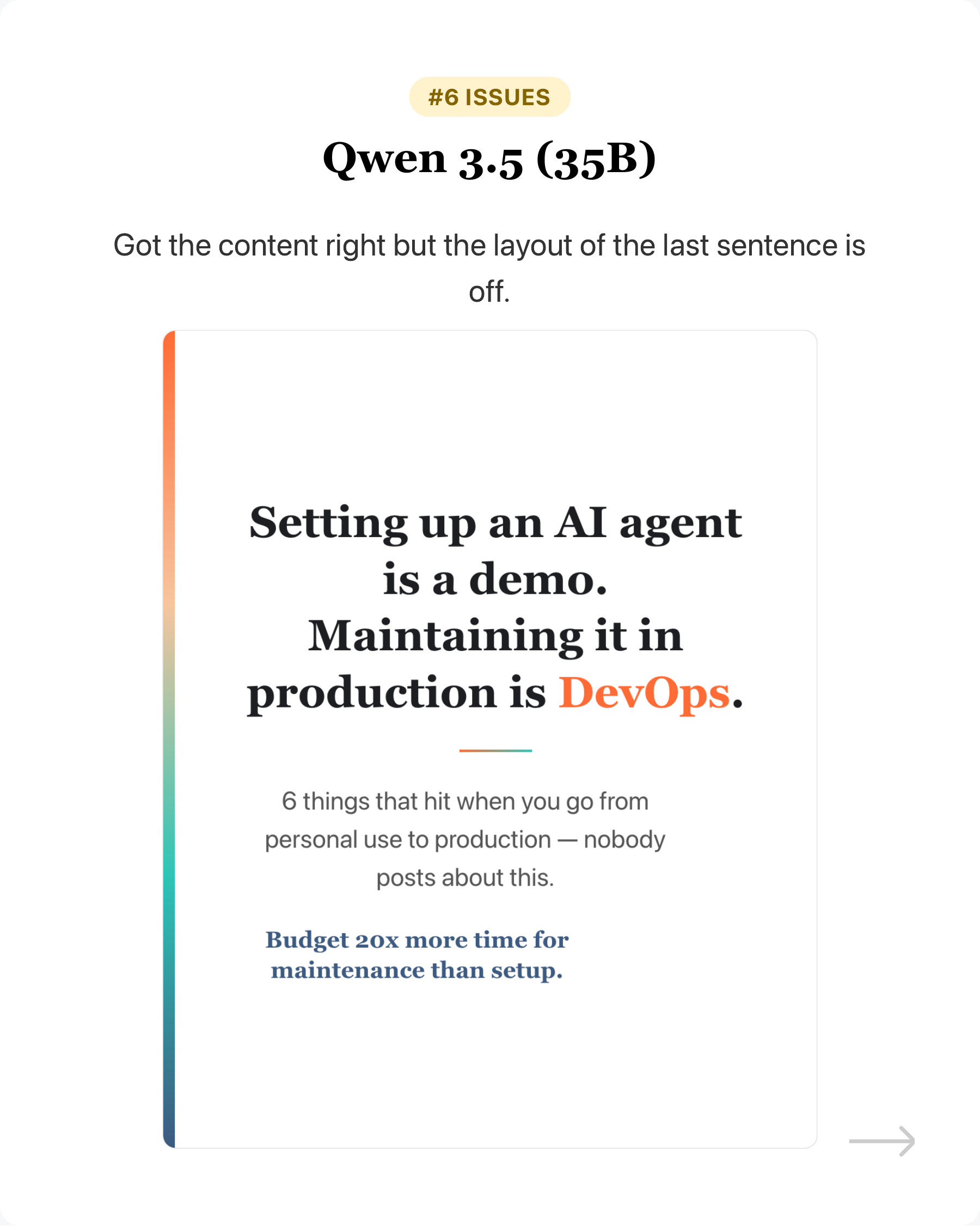

- Qwen 3.5 (35B) - Got the content right, but the layout of the last sentence is off.

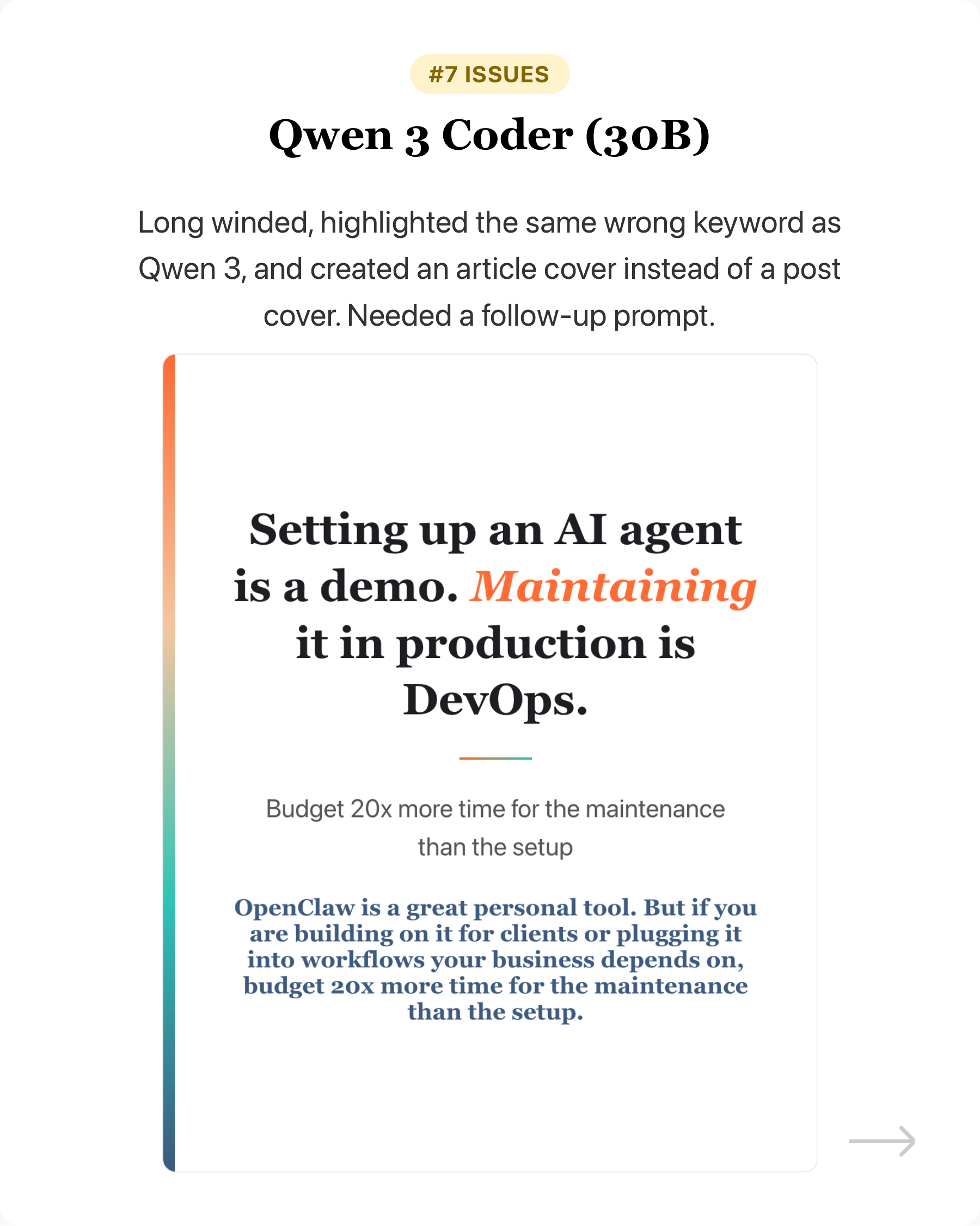

- Qwen 3 Coder (30B) - Long-winded. It highlighted the same wrong keyword as Qwen 3 and created an article cover instead of a post cover, so it needed a follow-up prompt.

Failed:

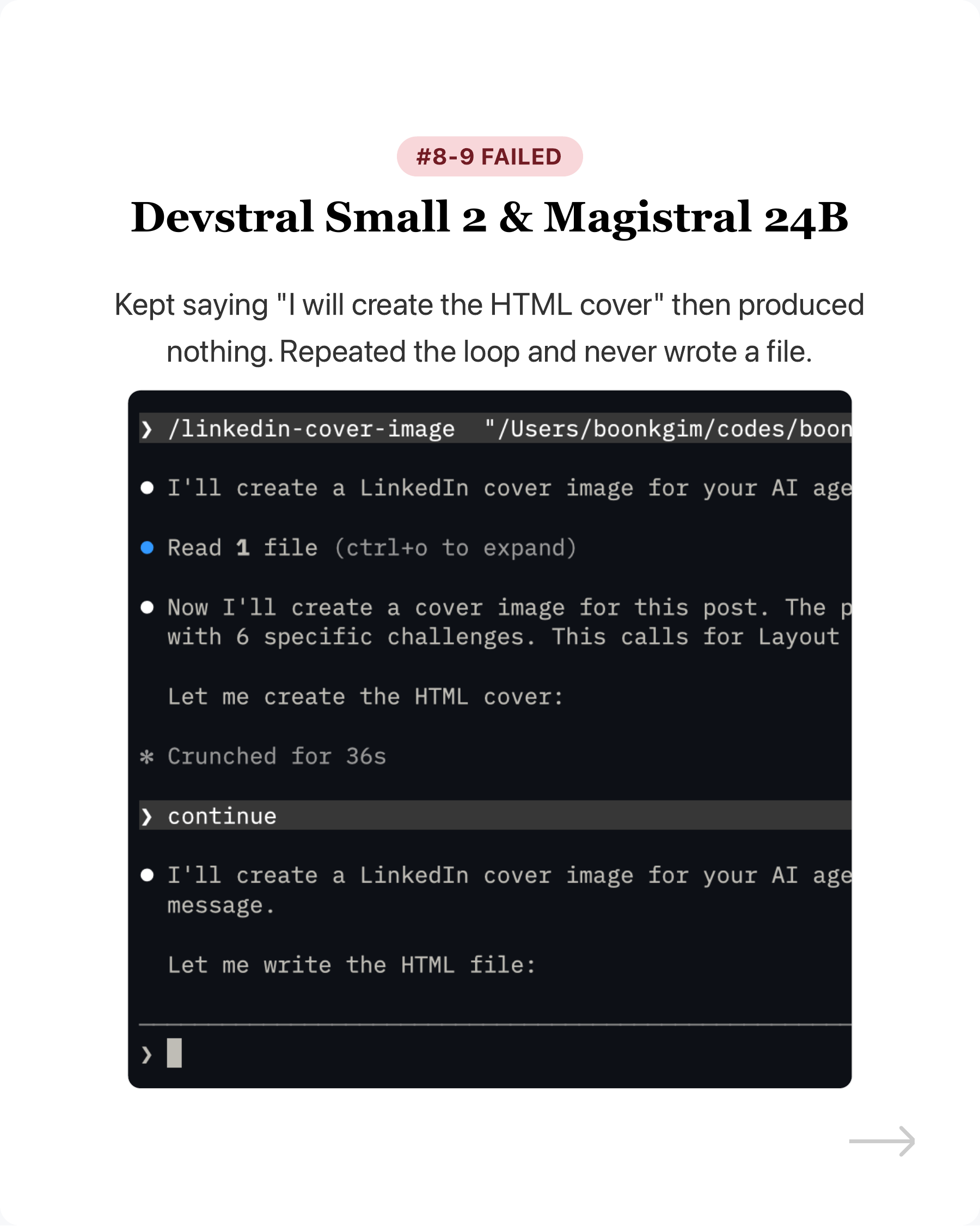

- Devstral Small 2 - Kept saying “I will create the HTML cover”, then produced nothing.

- Magistral (24B) - Same problem. It repeated the loop and never wrote a file (see screenshot).
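Both failures share a pattern that is easy to catch mechanically: the model announces the file but never writes it. If you rerun this kind of one-shot test, a small check could flag those runs automatically before any visual review. Here is a hypothetical sketch. The `triage_run` helper and the `cover.html` file name are assumptions for illustration. Judging layout and keyword highlighting still takes a human look.

```python
import tempfile
from pathlib import Path


def triage_run(output_dir: Path, expected_name: str = "cover.html") -> str:
    """Flag runs where the model never wrote a usable HTML file.

    Returns "failed" when the expected file is missing, empty, or has no
    "<html" tag. Otherwise returns "needs review", because layout and
    keyword highlighting still have to be judged by eye.
    """
    out = output_dir / expected_name  # ASSUMPTION: hypothetical output file name
    if not out.is_file() or out.stat().st_size == 0:
        return "failed"
    text = out.read_text(encoding="utf-8", errors="replace").lower()
    if "<html" not in text:
        return "failed"
    return "needs review"


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        run_dir = Path(tmp)
        print(triage_run(run_dir))  # nothing written yet, like the looping models
        (run_dir / "cover.html").write_text(
            "<html><body>Demo vs DevOps</body></html>", encoding="utf-8"
        )
        print(triage_run(run_dir))
```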

—

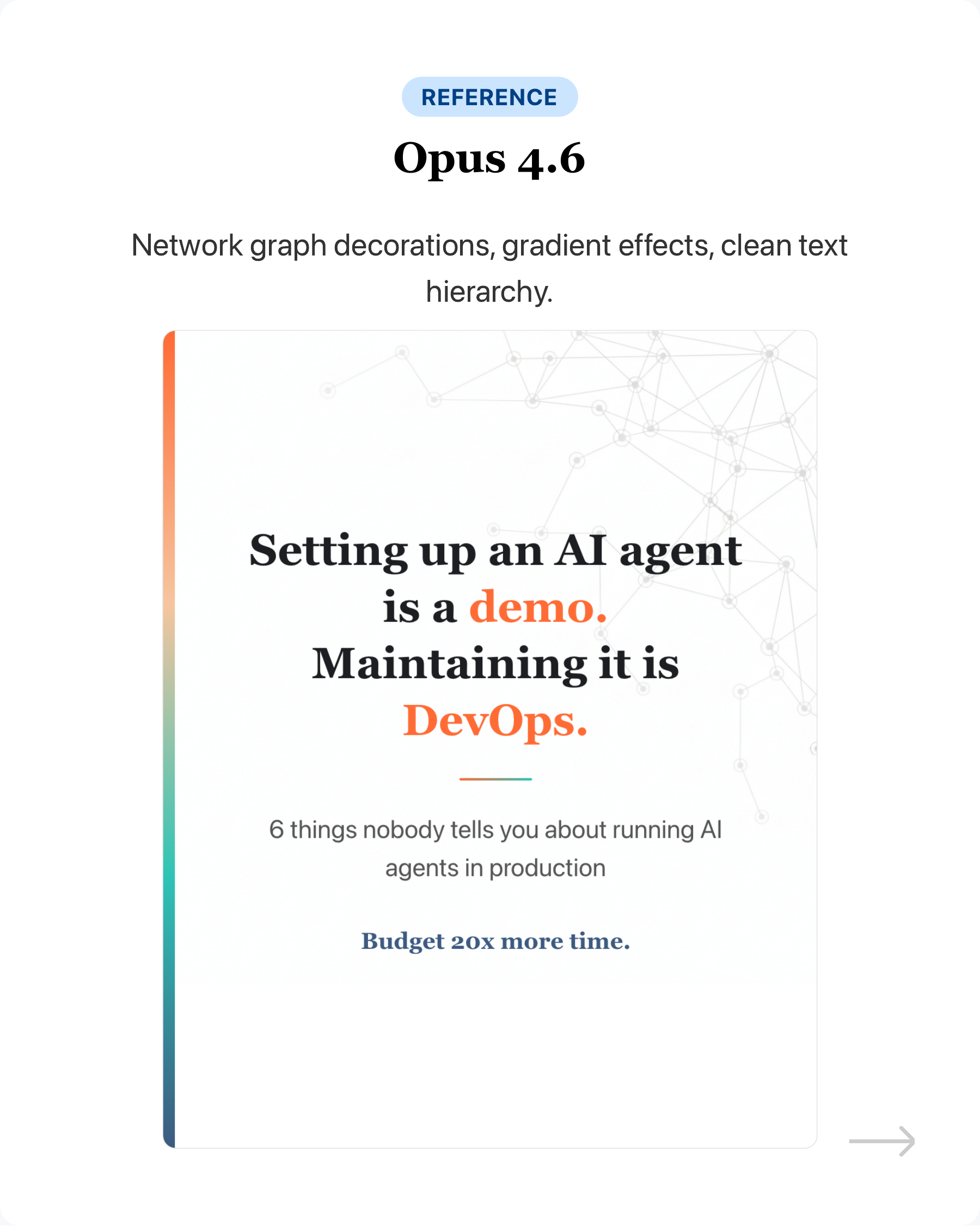

The last image is Opus 4.6, for comparison: network-graph decorations, gradient effects and a clean text hierarchy. The gap is still visible, but GLM 4.7 Flash got closer than I expected.

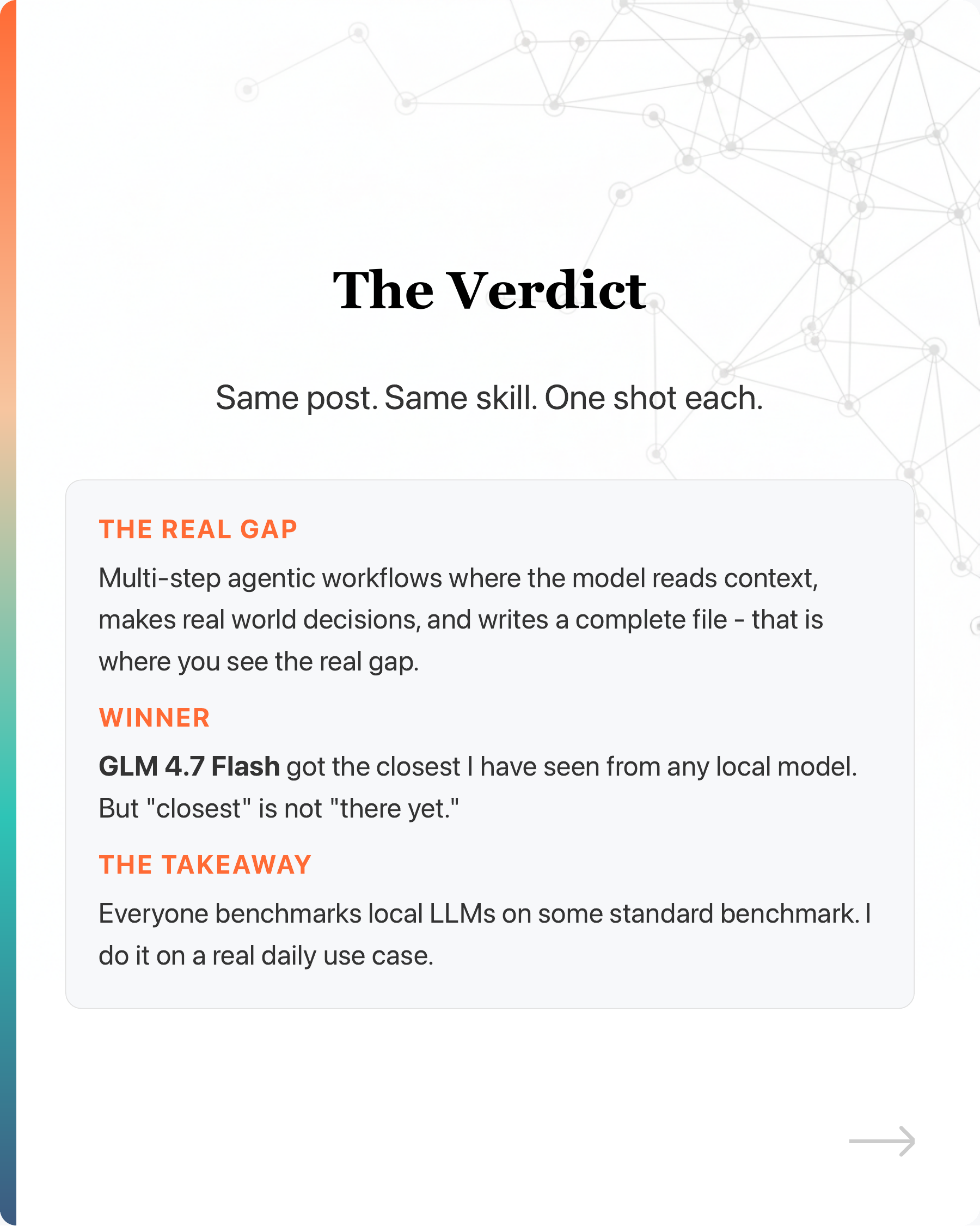

Most people evaluate local LLMs on standard benchmarks. I test them on a real task I run every day.

Multi-step agentic workflows, where the model reads context, makes real-world decisions and writes a complete file, are where the real gap shows.

GLM 4.7 Flash is probably the closest I have seen any local model get. I think it is now usable for some tasks.

#AI #ClaudeCode #LocalLLM