Don't believe the BS that you can use Claude Code for free.



Ollama recently made their API compatible with Claude Code. Many creators quickly jumped on the opportunity to farm engagement with the hook: “You can now use Claude Code for free!”

My thought? Claude Code without Opus 4.5 is not Claude Code. Period.

But this is exciting news. Not because I can use Claude Code for free, but because I see an opportunity to optimize costs by delegating easier tasks to local LLMs.

The key question is: what tasks can local LLMs handle? I tested out 7 local LLMs.

In this post, I will explain the BS and share my first experiment.

—

Why the BS?

Users have praised Claude Code as one of the best AI tools, not only for coding but for many other tasks.

But the price feels steep to many. The $20/month plan isn't enough for serious work; you need at least Max 5x ($100/month). Many heavy users, including me, subscribe to Max 20x ($200/month). It's a steal. Still, many people who are eager to try it aren't ready to pay.

Ollama’s recent announcement means you can buy a Mac, a Strix Halo, or a GPU and use Claude Code for “free” with local LLMs.
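For reference, the Ollama setup mostly comes down to redirecting Claude Code's API endpoint. The following is a minimal sketch, assuming Ollama is serving locally on its default port 11434 and that Claude Code reads the `ANTHROPIC_BASE_URL` and `ANTHROPIC_AUTH_TOKEN` environment variables. The helper names are hypothetical, so check Ollama's own Claude Code instructions for the current details.

```python
import os
import shutil
import subprocess

# Assumption: Ollama listens on its default local port and exposes an
# Anthropic-compatible API there. Check Ollama's docs for the current setup.
OLLAMA_BASE_URL = "http://localhost:11434"


def claude_code_env_for_ollama(base_url: str = OLLAMA_BASE_URL) -> dict:
    """Copy the current environment and point Claude Code at a local Ollama server."""
    env = dict(os.environ)
    env["ANTHROPIC_BASE_URL"] = base_url
    # Assumption: a local Ollama server ignores the token, but Claude Code
    # still expects one to be set, so a placeholder value is used.
    env["ANTHROPIC_AUTH_TOKEN"] = "ollama"
    return env


def launch_claude_code(model: str) -> None:
    """Start an interactive Claude Code session backed by a local model."""
    if shutil.which("claude") is None:
        raise FileNotFoundError("Claude Code CLI ('claude') not found on PATH")
    subprocess.run(["claude", "--model", model], env=claude_code_env_for_ollama(), check=True)
```

Usage would look like `launch_claude_code("qwen3-coder")`, where the tag is only an example and should be replaced with whichever model you have pulled into Ollama.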

It’s appealing, as it is a one-time investment, and you can use the machine for other purposes. Creators are leveraging this opportunity to farm engagement.
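The "one-time investment" pitch is really a break-even calculation. Here is a minimal sketch using the plan prices above. The $2,000 hardware figure is a hypothetical placeholder, not a quote for any specific machine, and the math ignores electricity and resale value.

```python
import math

# Monthly Claude plan prices mentioned in the post (USD).
PLANS = {"Pro": 20, "Max 5x": 100, "Max 20x": 200}


def breakeven_months(hardware_cost: float, monthly_fee: float) -> int:
    """Whole months of subscription fees needed to equal a one-time hardware cost."""
    if monthly_fee <= 0:
        raise ValueError("monthly_fee must be positive")
    return math.ceil(hardware_cost / monthly_fee)


for name, fee in PLANS.items():
    # Hypothetical $2,000 machine; substitute your actual hardware price.
    print(f"{name}: {breakeven_months(2000, fee)} months")
```

At that placeholder price, the machine "pays for itself" in 10 months against Max 20x and in 20 months against Max 5x. What the formula can't capture is the quality gap: the local model doing the work isn't Opus 4.5.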

But the reality? Claude Code without Opus 4.5 is not the same Claude Code we praised. Local LLMs are far less intelligent.

—

But for those of us who understand the difference, there's an opportunity to optimize costs by delegating easier tasks to local LLMs.

I’m interested in finding out what tasks local LLMs can handle.

This is my typical flow when using Claude Code. This is for coding, but I have similar flows for marketing and content creation.

1. Research and planning

2. Create PRD and implementation plan

3. Break plan into bite-sized tasks

4. Implement + review with reflection pattern

5. Final review with agents

6. Final review and QA by me
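The post doesn't detail its reflection pattern. One common form loops through implement, review, and revise until the reviewer signs off or a round limit is reached. Below is a minimal sketch of that loop. `reflect`, `generate`, and `review` are hypothetical names, and in practice each callable would wrap a model call. That model call inside the implement + review loop is exactly the work one might hand to a local LLM.

```python
from dataclasses import dataclass
from typing import Callable, Optional

# generate(task, previous_draft, feedback) -> new draft
Generate = Callable[[str, Optional[str], Optional[str]], str]
# review(task, draft) -> None if approved, otherwise a critique
Review = Callable[[str, str], Optional[str]]


@dataclass
class ReflectionResult:
    output: str
    rounds: int  # number of reviews performed
    approved: bool


def reflect(task: str, generate: Generate, review: Review, max_rounds: int = 3) -> ReflectionResult:
    """Implement, review, revise until the reviewer approves or rounds run out."""
    draft = generate(task, None, None)  # first attempt: no prior draft or feedback
    for round_no in range(1, max_rounds + 1):
        feedback = review(task, draft)
        if feedback is None:
            return ReflectionResult(draft, round_no, True)
        if round_no < max_rounds:
            draft = generate(task, draft, feedback)  # revise using the critique
    # Round limit hit: return the last reviewed (unapproved) draft.
    return ReflectionResult(draft, max_rounds, False)
```

Keeping `generate` and `review` as separate callables means the implementer and the reviewer can be different models. For example, a local model could implement and a stronger model could review.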

Based on my quick tests, we can forget about asking local LLMs to do research and planning. All of them failed at a simple instruction: “Visit https://learnparrot.ai/ and tell me about the website.”

So I think the only viable use case is (4): implementation + review loops.

It looks like a narrow use case, but it's where we burn a lot of tokens. So I think it's worth a try.
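To make the cost idea concrete, here is a minimal routing sketch. It sends only the implementation + review loop to a local model and keeps every other stage on Opus. The stage names mirror the workflow list above. The model labels are hypothetical placeholders, not real model IDs.

```python
from enum import Enum
from typing import Optional


class Stage(Enum):
    RESEARCH_AND_PLANNING = 1
    PRD_AND_IMPLEMENTATION_PLAN = 2
    BREAK_INTO_TASKS = 3
    IMPLEMENT_AND_REVIEW = 4
    AGENT_FINAL_REVIEW = 5
    HUMAN_FINAL_QA = 6


# Hypothetical labels; swap in the model identifiers you actually use.
CLOUD_MODEL = "opus-4.5"
LOCAL_MODEL = "local-llm"

# Per the quick tests in the post, only the implement + review loop is a candidate.
DELEGATE_TO_LOCAL = frozenset({Stage.IMPLEMENT_AND_REVIEW})


def pick_model(stage: Stage) -> Optional[str]:
    """Choose a model for a workflow stage; None means a human does it."""
    if stage is Stage.HUMAN_FINAL_QA:
        return None
    return LOCAL_MODEL if stage in DELEGATE_TO_LOCAL else CLOUD_MODEL
```

Keeping the delegable set explicit makes it easy to widen later if a local model proves itself on another stage.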

The main selection criterion, then, is instruction-following capability.

A very common task is coding a new feature or page by referring to existing code samples or templates. It makes a good test of instruction-following.

So, I crafted my first test:

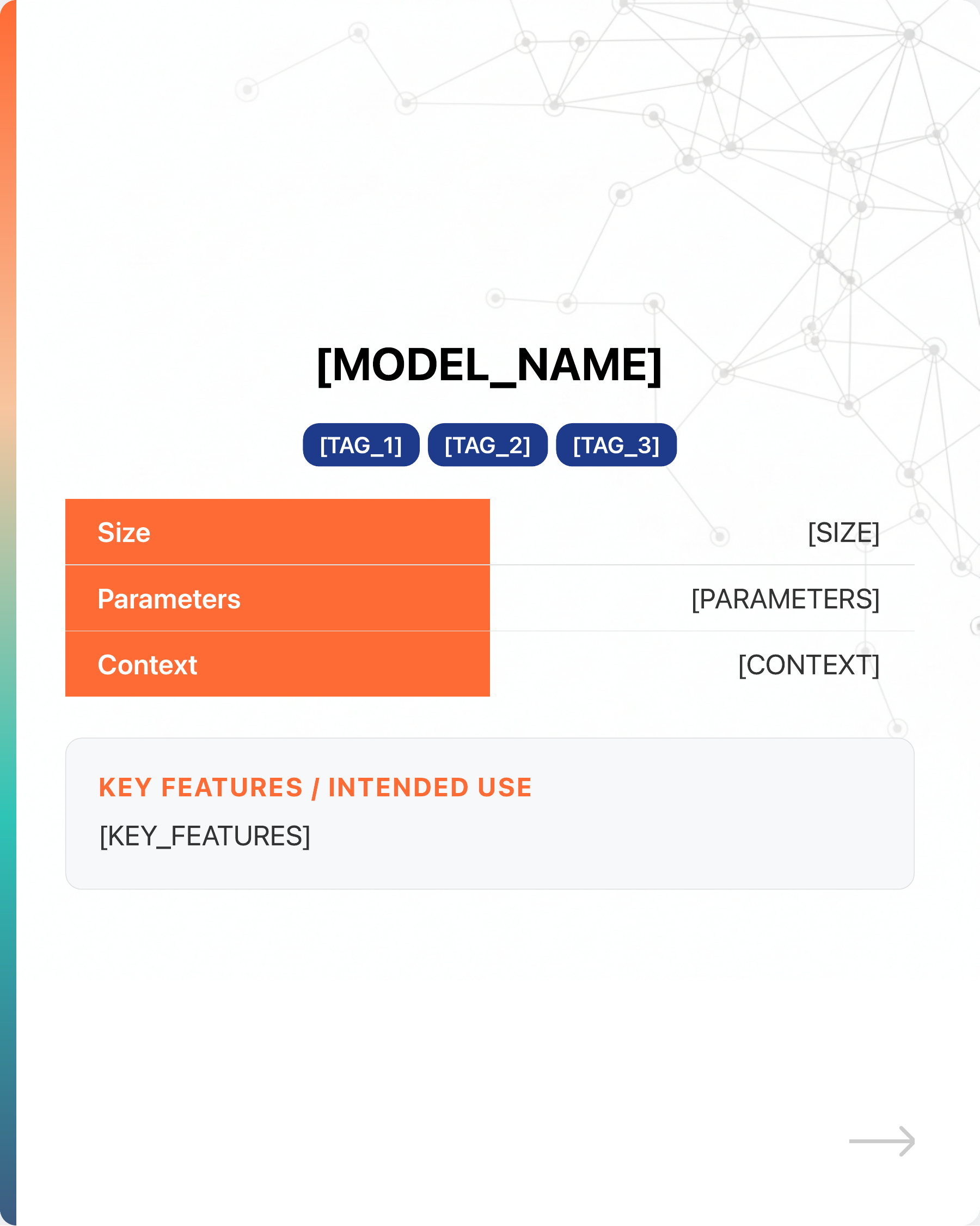

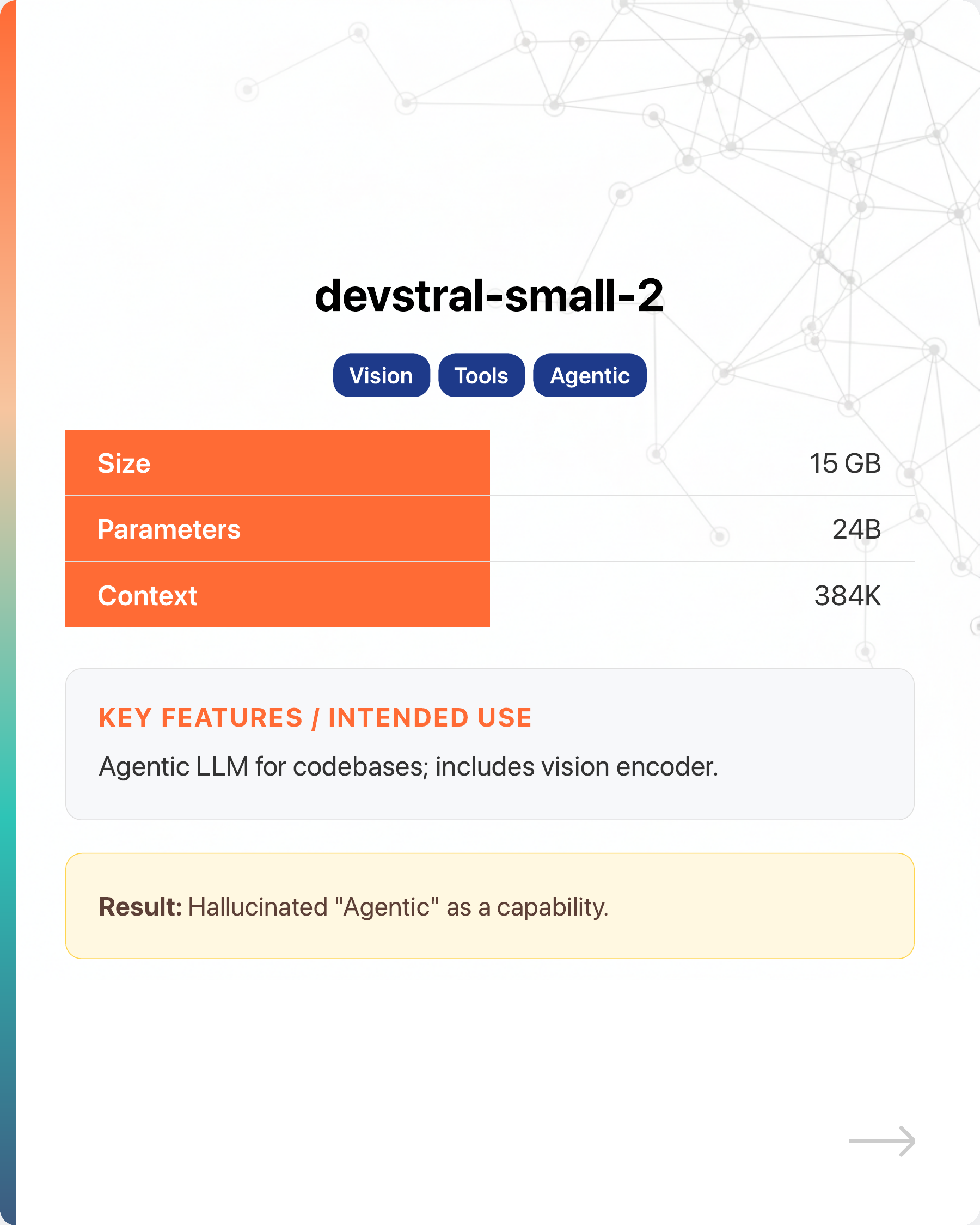

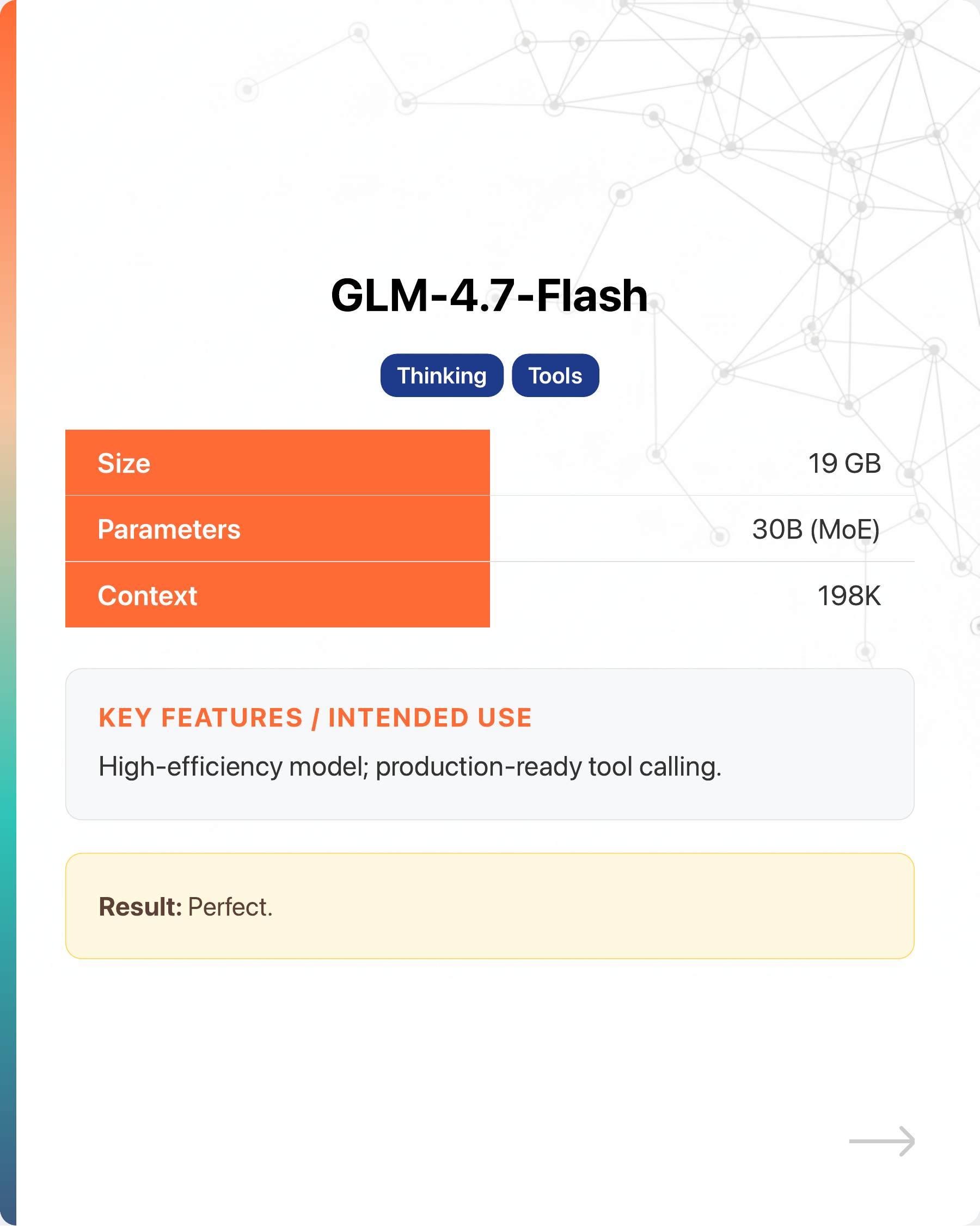

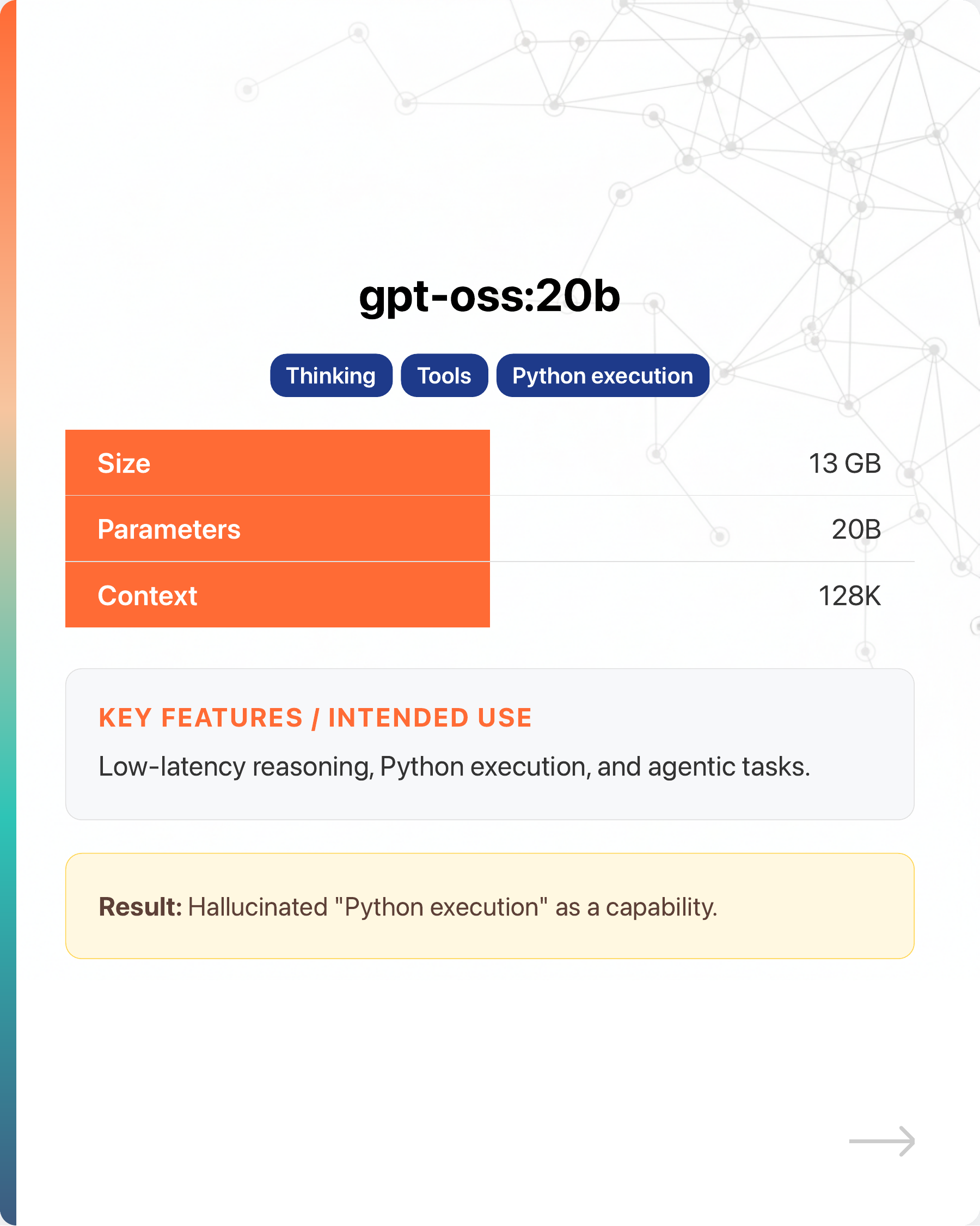

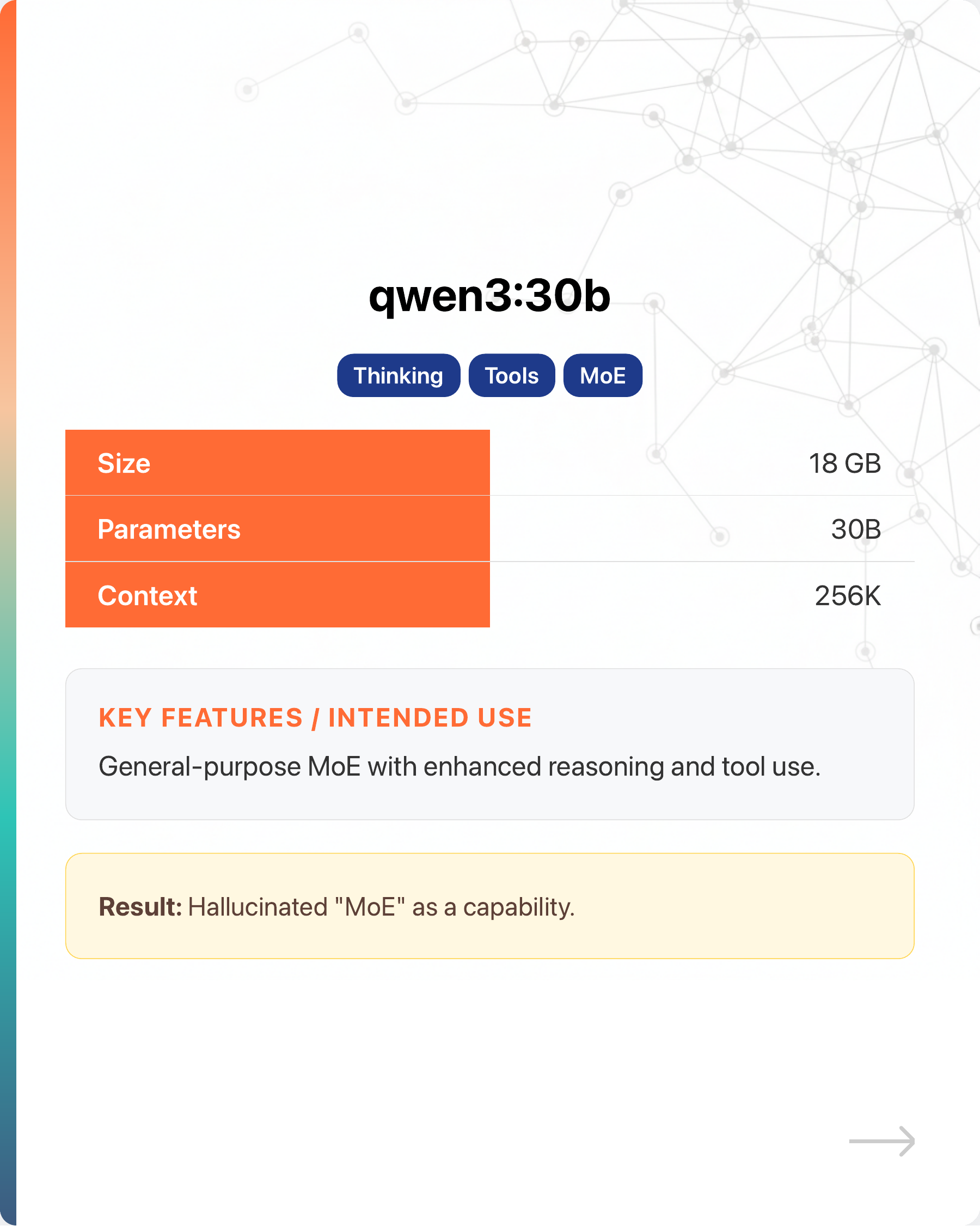

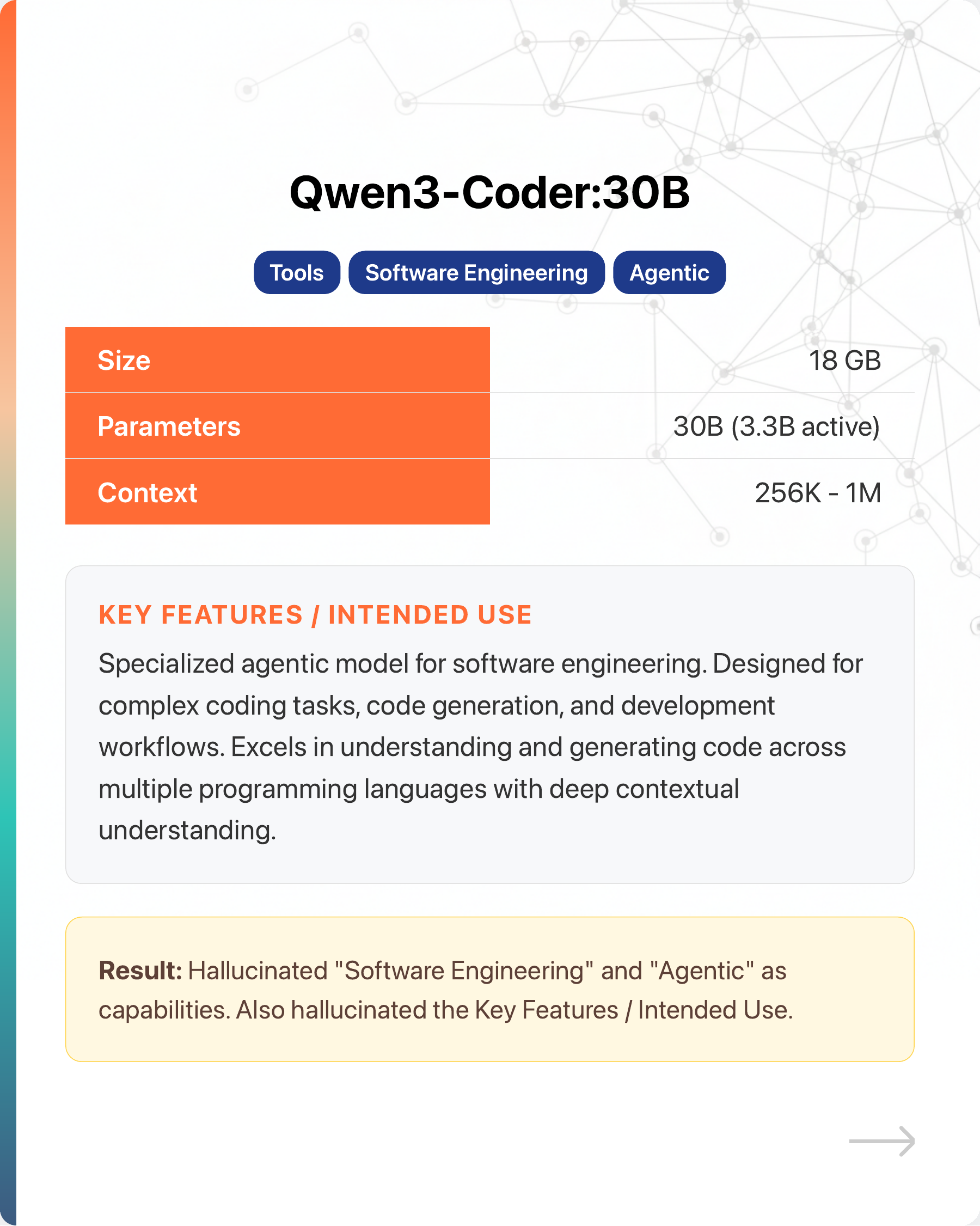

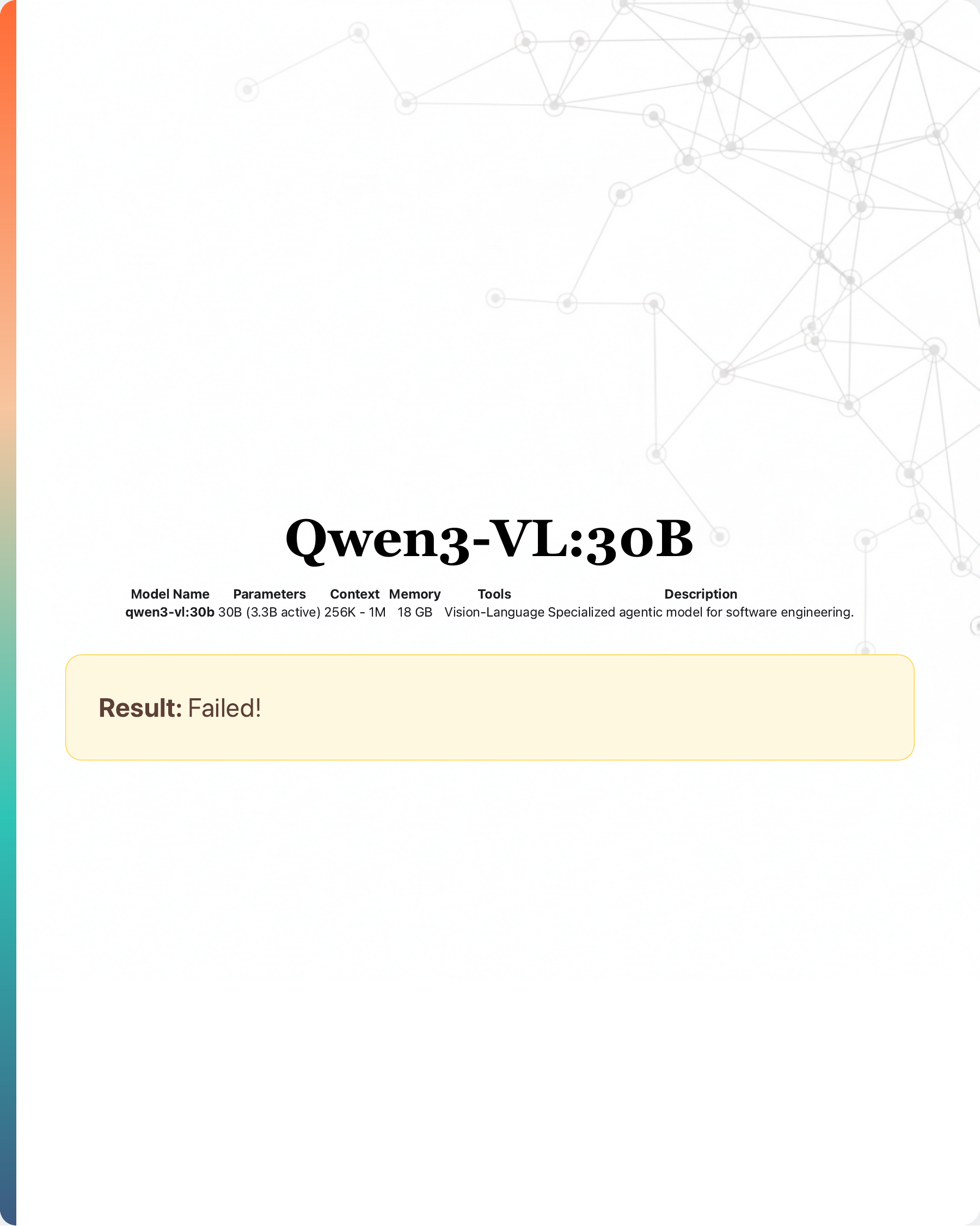

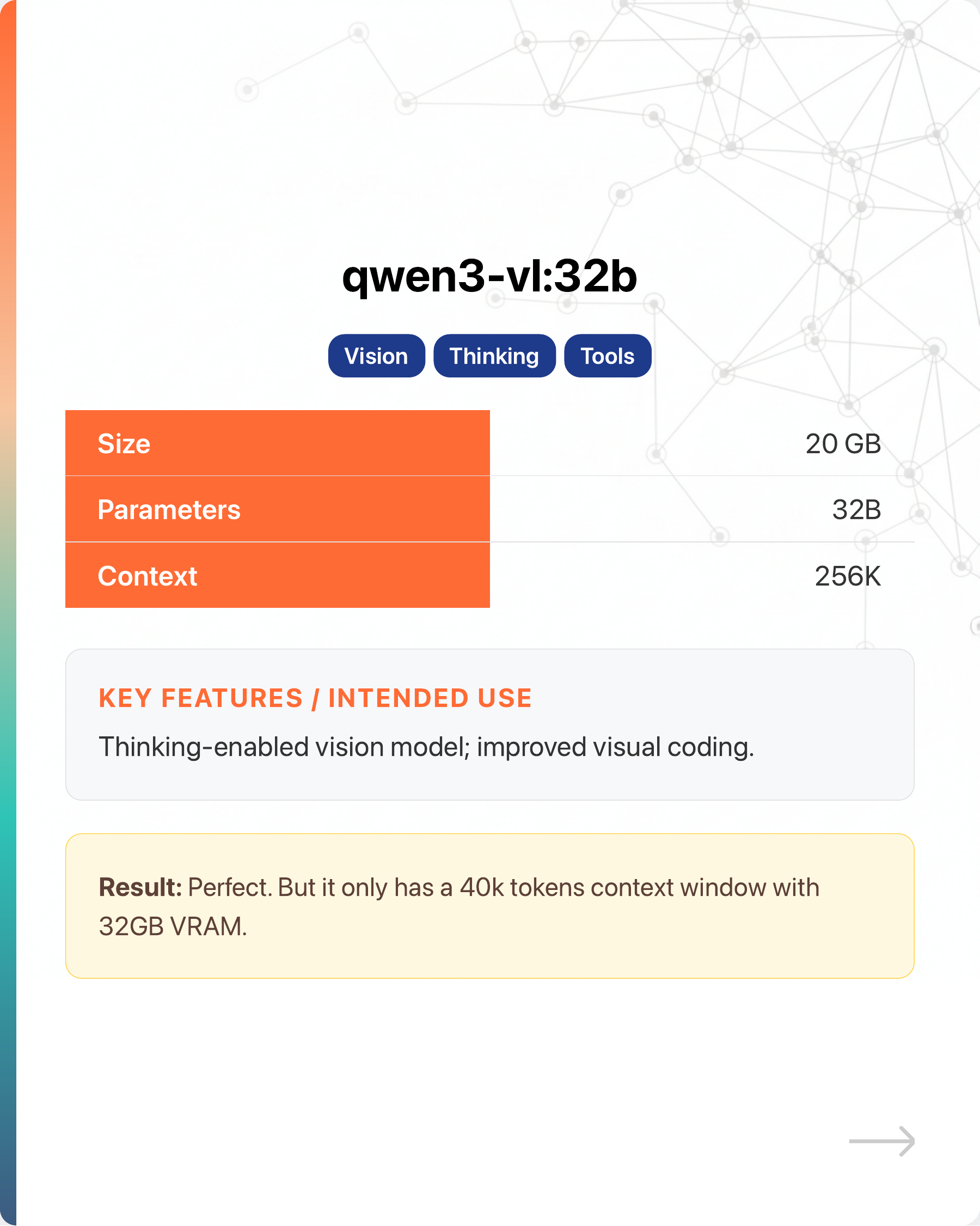

- Used Opus to create HTML pages, one per model, that I could screenshot as LinkedIn carousel slides showing each model's info.

- Turned one of the pages into a template and deleted the rest.

- Asked each LLM to refer to the template to code its own page, given its specs.
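The post leaves the judging to the reader. One way to get a rough number for "did it follow the template?" is to compare each page's HTML skeleton, meaning its sequence of tags and classes, against the template's. This is an assumption, not the author's method. Below is a minimal standard-library sketch, and `skeleton` and `template_similarity` are hypothetical helper names.

```python
from difflib import SequenceMatcher
from html.parser import HTMLParser


class _Skeleton(HTMLParser):
    """Collects start tags with their (sorted) classes, ignoring text content."""

    def __init__(self) -> None:
        super().__init__()
        self.tokens: list = []

    def handle_starttag(self, tag, attrs):
        # attrs is a list of (name, value); value may be None for bare attributes.
        classes = " ".join(sorted((dict(attrs).get("class") or "").split()))
        self.tokens.append(f"{tag}.{classes}" if classes else tag)


def skeleton(html: str) -> list:
    parser = _Skeleton()
    parser.feed(html)
    parser.close()
    return parser.tokens


def template_similarity(template_html: str, generated_html: str) -> float:
    """0.0 to 1.0: how closely the generated page's tag/class sequence matches the template."""
    return SequenceMatcher(None, skeleton(template_html), skeleton(generated_html)).ratio()
```

A score near 1.0 means the model kept the template's structure. It says nothing about whether the content is correct or whether the page renders well, so looking at the screenshots still matters.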

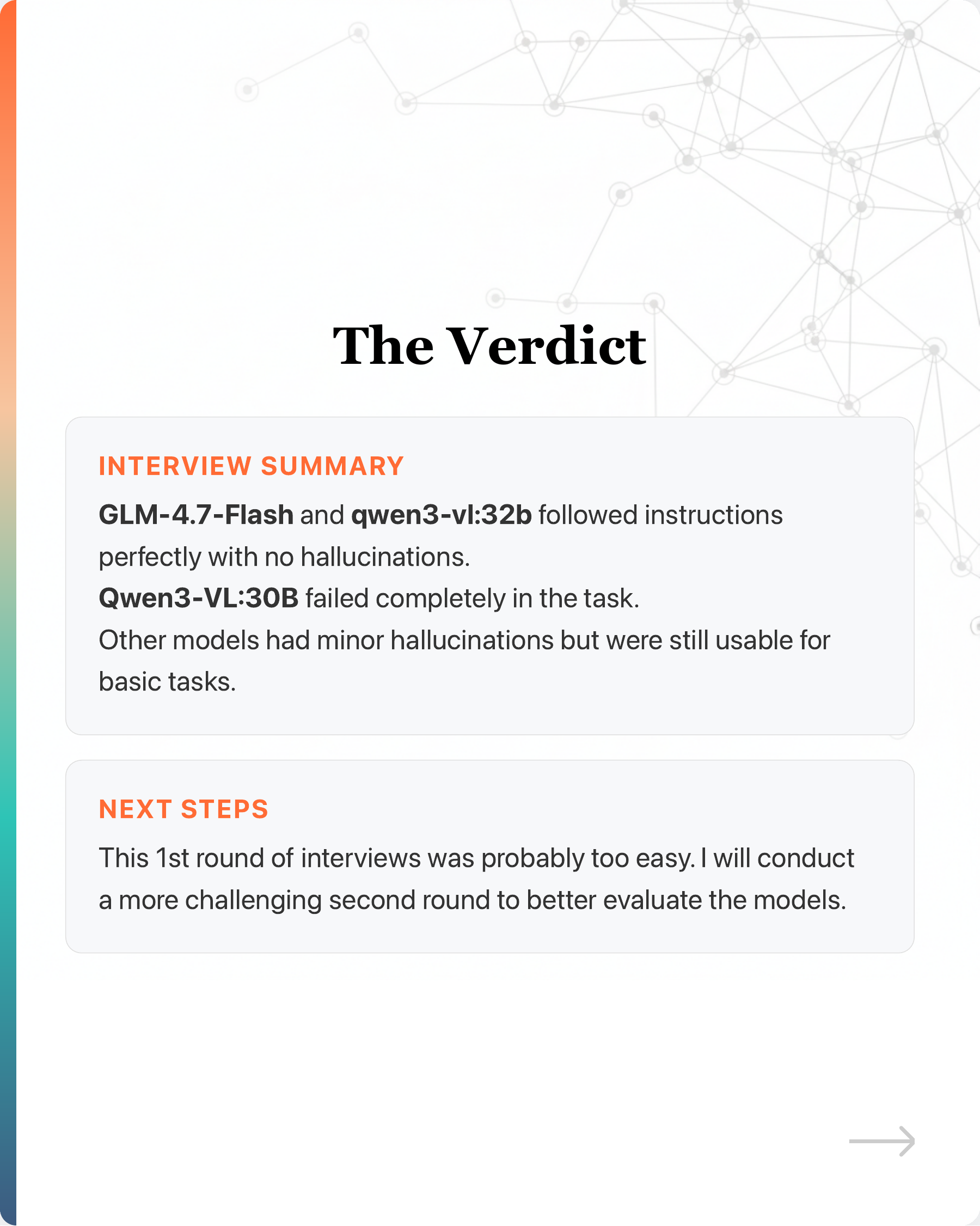

Swipe the carousel to see the results.

Who would you hire?

#LocalLLM #ClaudeCode #VibeCoding
