How I Collaborate with AI to Refine What I Know

In this article, I share two real-world examples of collaborating with AI to refine what I know.

Yesterday, I shared a post on how to use the Johari Window as a framework to collaborate with LLMs.

Here is how I put that framework into practice at work to refine what both the AI and I know.

For context, I’m setting up Mautic, an open-source marketing automation platform, for one of our business units.

One very common issue with open-source software is that the documentation usually shows the simplest way to set it up, but it’s not always the best practice for production. Googling may not help either, as you’ll most likely land on SEO articles written by amateurs.

This is where LLMs can help. Since LLMs are trained on a huge amount of data, chances are they know the best practices.

Example 1 – Set Up Worker to Consume Email Queue

A common mistake is to run the worker in the background with nohup or &. If the worker crashes or the server reboots, nothing brings it back. As you’ll see later in this post, Gemini explained why this is a bad idea.

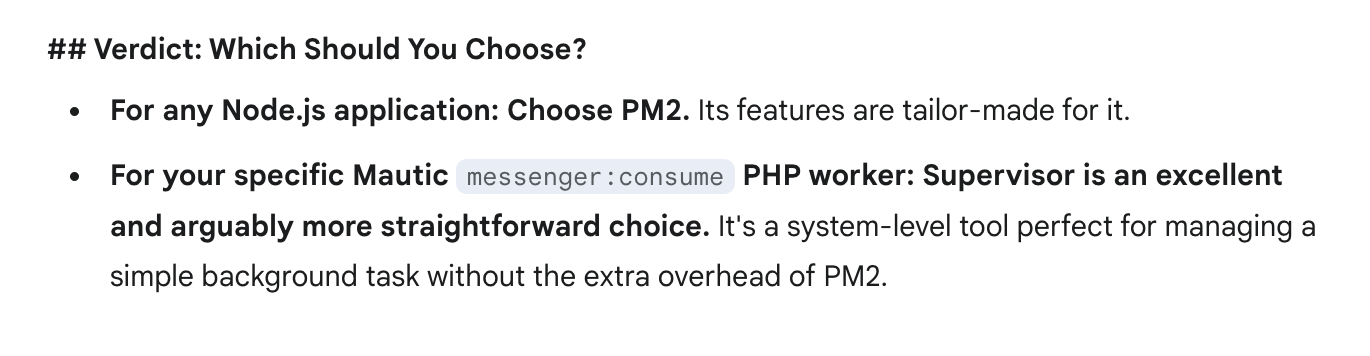

The best practice is to use a process manager. One of the most popular ones I know is pm2. I’m familiar with it, but I was curious whether Gemini had a better suggestion.
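To make the difference concrete, here is a minimal Python sketch of the core job a process manager does that nohup doesn't: restarting a worker each time it exits. This is a toy for illustration only. It is not how Supervisor or pm2 are implemented, and the `supervise` function and its parameters are hypothetical.

```python
import subprocess
import sys
import time


def supervise(cmd: list[str], max_restarts: int = 3, backoff_s: float = 1.0) -> int:
    """Run cmd, restarting it each time it exits, up to max_restarts times.

    Returns the number of restarts performed. A worker launched with nohup
    or & gets none of this: once it exits, it stays down.
    """
    restarts = 0
    while True:
        result = subprocess.run(cmd)  # blocks until the worker exits
        print(f"worker exited with code {result.returncode}", file=sys.stderr)
        if restarts >= max_restarts:
            return restarts
        restarts += 1
        time.sleep(backoff_s * restarts)  # wait a little longer after each exit


if __name__ == "__main__":
    # A stand-in "worker" that crashes immediately, to show the restarts.
    crashing_worker = [sys.executable, "-c", "import sys; sys.exit(1)"]
    print(supervise(crashing_worker, max_restarts=2, backoff_s=0.1))
```

Real process managers add much more on top of this loop: keeping workers running across reboots (when the manager itself runs as a system service), log handling, graceful stops, and a control CLI such as `supervisorctl`.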

To my surprise, it suggested Supervisor instead of pm2, a process manager I hadn’t heard of before.

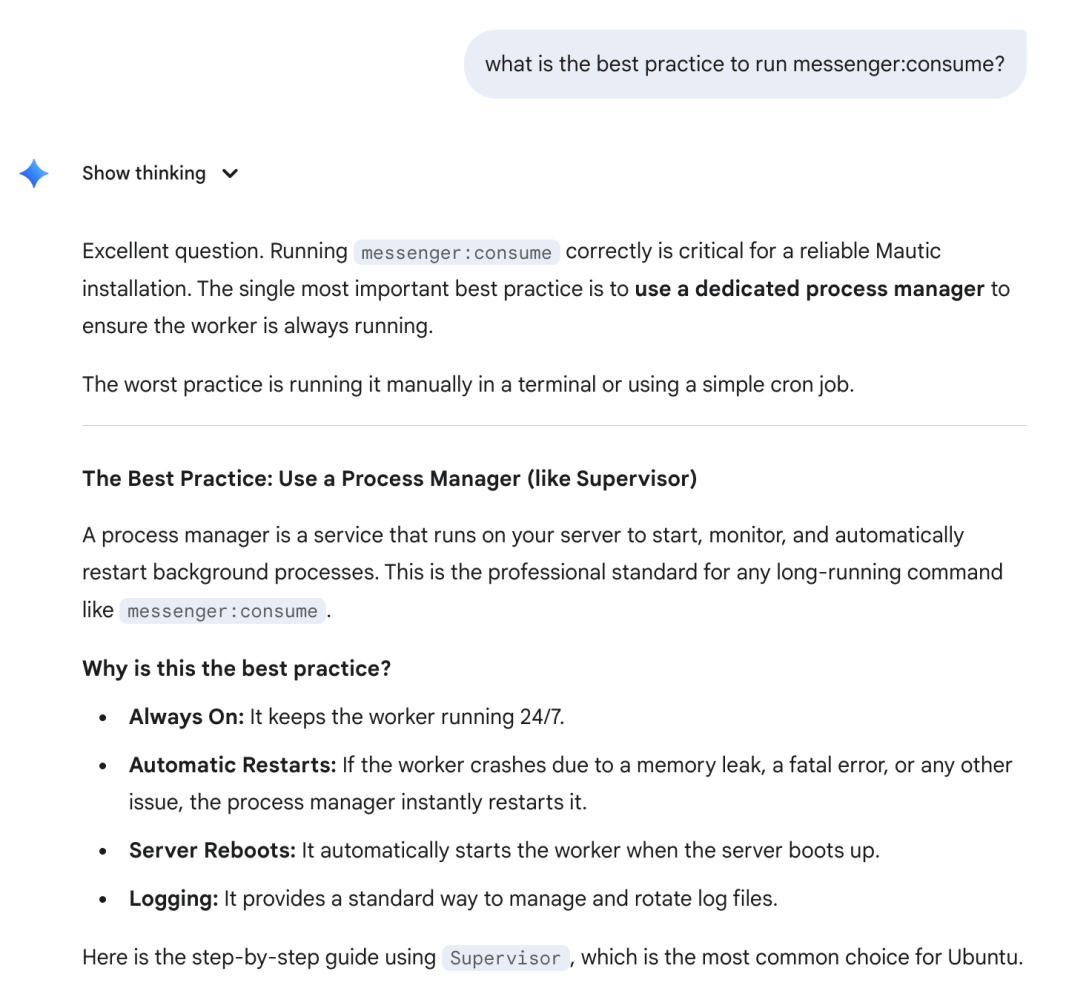

Gemini suggested Supervisor

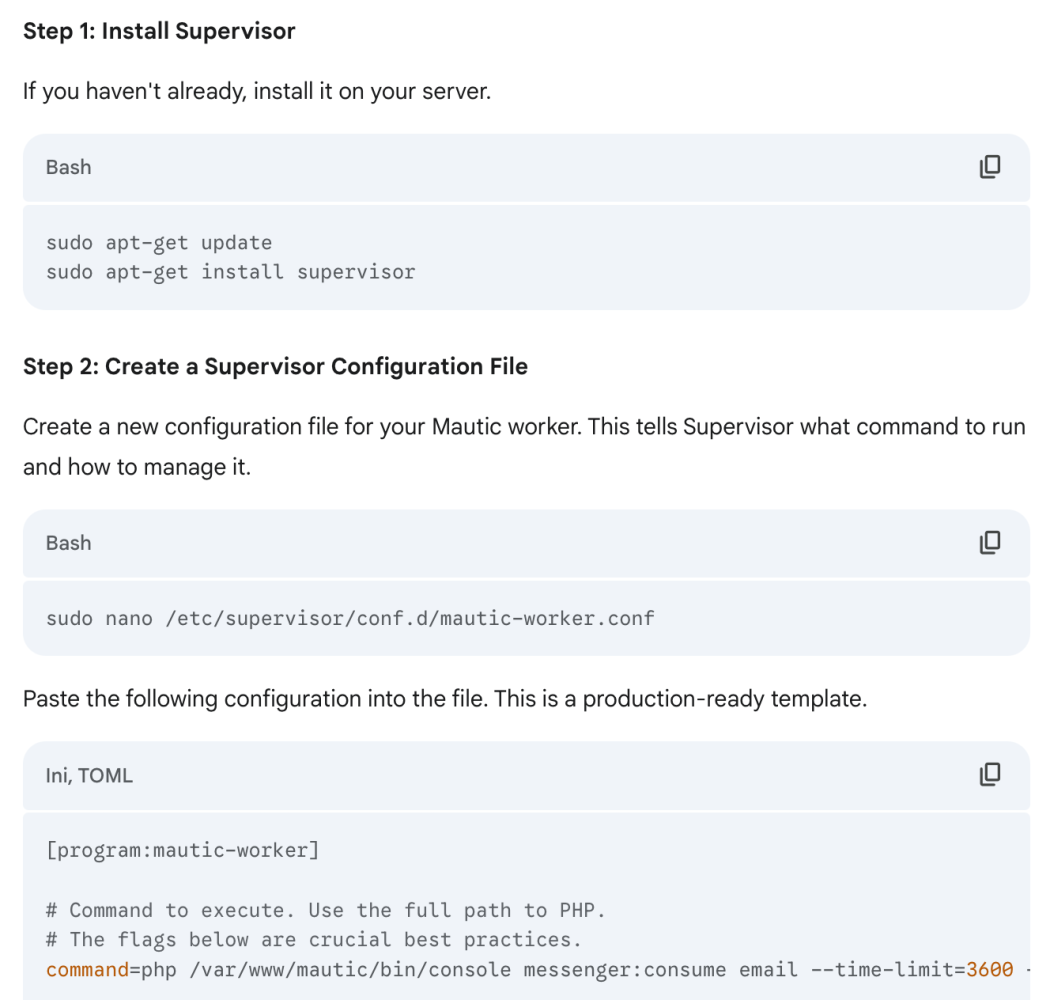

It followed up with a clear explanation and a step-by-step guide on how to set it up.

It also pointed out common bad practices.
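As a rough illustration of what such a setup produces, the Python sketch below renders a Supervisor `[program:x]` section with the standard-library `configparser` (Supervisor’s config files are INI-style). It is not the configuration Gemini gave me. Everything specific is an assumption: the install path `/var/www/mautic`, the `www-data` user, the log path, the program name, and the worker command, which assumes Mautic 5’s Symfony Messenger `email` transport.

```python
import configparser
import io

# Hypothetical values: adjust the install path, user and log path to your server.
# The worker command assumes Mautic 5's Symfony Messenger "email" transport;
# older Mautic versions use different console commands.
MAUTIC_ROOT = "/var/www/mautic"
PROGRAM = "mautic-email-worker"


def render_supervisor_program(num_workers: int = 1) -> str:
    """Return a Supervisor [program:x] section as INI text."""
    # interpolation=None leaves Supervisor's own %(...)s placeholders untouched.
    config = configparser.ConfigParser(interpolation=None)
    config[f"program:{PROGRAM}"] = {
        # --time-limit makes the worker stop after roughly an hour; Supervisor
        # then starts a fresh process, which limits memory growth in long-running PHP.
        "command": f"php {MAUTIC_ROOT}/bin/console messenger:consume email --time-limit=3600",
        "directory": MAUTIC_ROOT,
        "user": "www-data",
        "numprocs": str(num_workers),
        # Supervisor requires process_num in the name when numprocs > 1.
        "process_name": "%(program_name)s_%(process_num)02d",
        "autostart": "true",    # start whenever supervisord starts (e.g. after a reboot)
        "autorestart": "true",  # restart the worker whenever it exits
        "startretries": "10",
        # Seconds to wait after SIGTERM before SIGKILL, so an in-flight message can finish.
        "stopwaitsecs": "60",
        "redirect_stderr": "true",
        "stdout_logfile": f"/var/log/supervisor/{PROGRAM}.log",
    }
    buf = io.StringIO()
    config.write(buf)
    return buf.getvalue()


if __name__ == "__main__":
    print(render_supervisor_program(num_workers=2))
```

Saving the output as, say, `/etc/supervisor/conf.d/mautic-email-worker.conf` (the default include directory on Debian and Ubuntu) and running `supervisorctl reread` followed by `supervisorctl update` should load the new program. Check the paths against your own distribution.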

That was helpful, but from experience, I’ve learned it’s best to challenge LLM outputs with guided reflection to get more accurate answers.

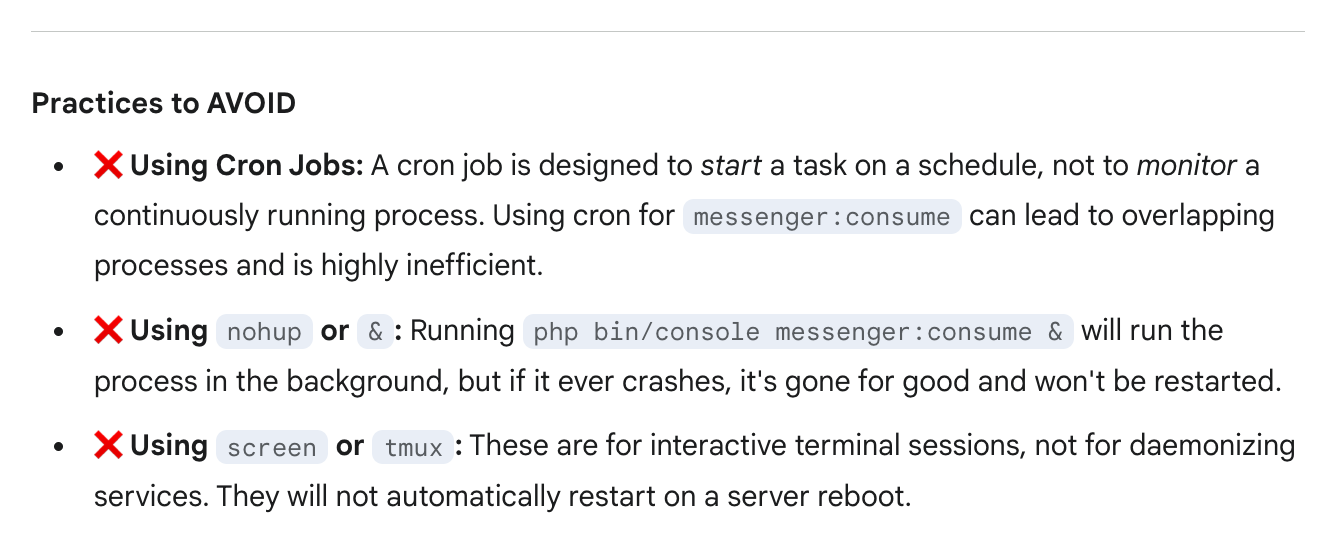

I asked it to compare Supervisor and pm2. It nicely explained why it preferred Supervisor over pm2.

I learned something new!

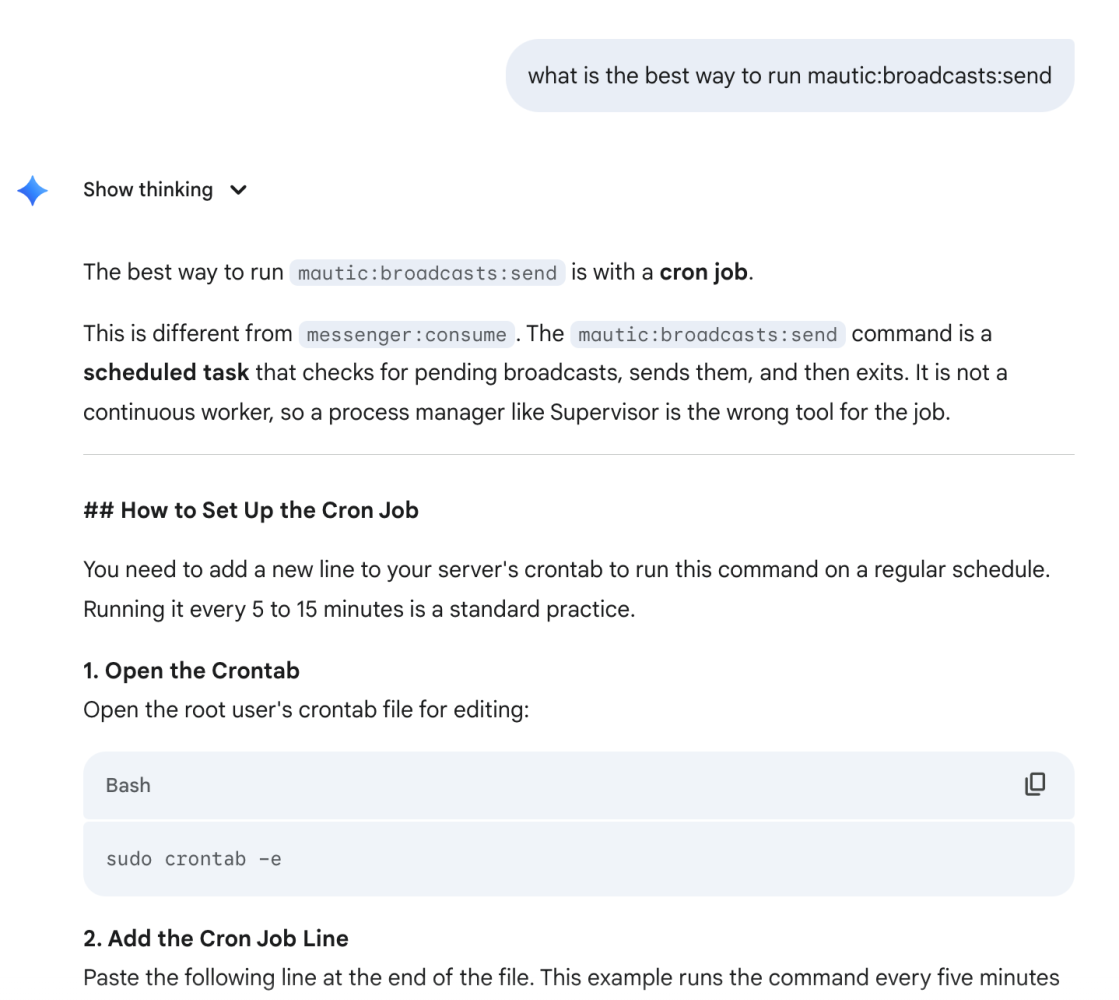

Example 2 – Set Up Scheduled Job to Broadcast Messages

Here, I expected that we would need to set up a cron job. Still, I asked Gemini to see if it had a better idea.

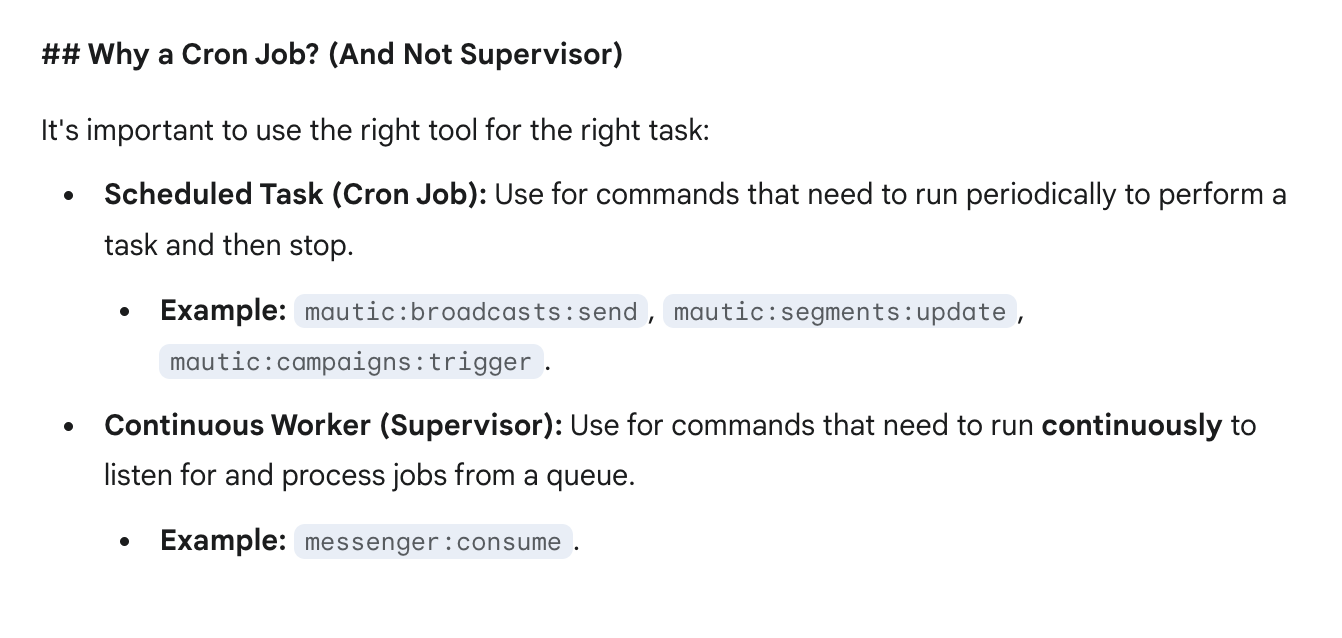

As expected, it suggested a cron job to run the broadcast command, and it walked me through the setup step by step.

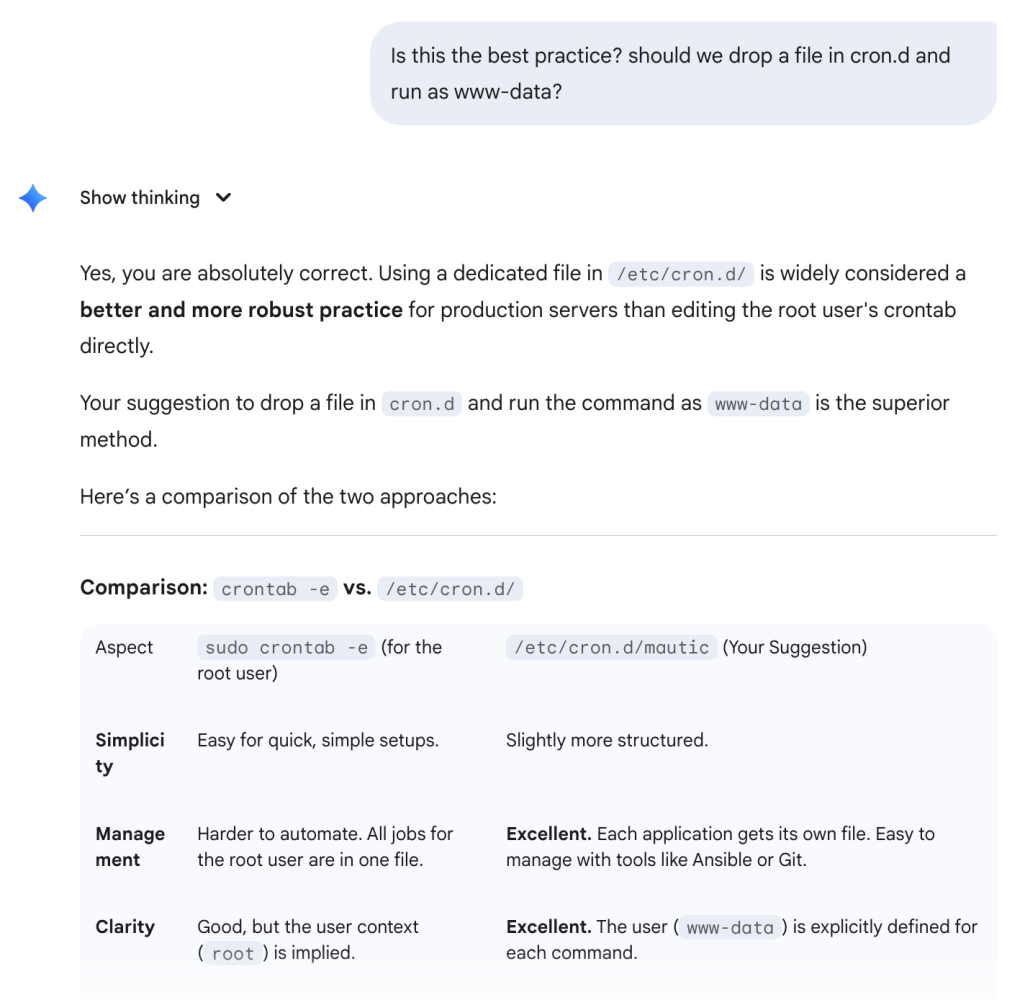
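For readers who haven’t set one up, here is a minimal Python sketch that composes a crontab entry for Mautic’s `mautic:broadcasts:send` console command. It is neither the setup Gemini proposed nor the alternative I suggested. The five-minute interval, PHP binary, install path, and log file are hypothetical placeholders.

```python
# Hypothetical placeholders: adjust to your server.
MAUTIC_ROOT = "/var/www/mautic"
PHP_BIN = "/usr/bin/php"
LOG_FILE = "/var/log/mautic/broadcasts.log"


def broadcast_cron_line(every_minutes: int = 5) -> str:
    """Build a crontab line that runs Mautic's broadcast command periodically."""
    if not 1 <= every_minutes <= 59:
        raise ValueError("every_minutes must be between 1 and 59")
    # Cron's five fields: minute, hour, day of month, month, day of week.
    schedule = f"*/{every_minutes} * * * *"
    command = f"{PHP_BIN} {MAUTIC_ROOT}/bin/console mautic:broadcasts:send"
    # Append stdout and stderr to a log so failed runs leave a trace.
    return f"{schedule} {command} >> {LOG_FILE} 2>&1"


if __name__ == "__main__":
    print(broadcast_cron_line())
```

The printed line can be added with `crontab -e` under the user that owns the Mautic files (for example, `crontab -u www-data -e`). Intervals that don’t divide 60 evenly give an uneven gap at the top of each hour.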

This time, however, my experience made me doubt that the suggested steps were best practice, so I pushed back and proposed an alternative.

Interestingly, it accepted my suggestion as the better practice, with a well-reasoned analysis.

The takeaway?

When working in a domain that both you and AI are familiar with, it’s good to challenge both your assumptions and the AI’s.

In the first example, the AI actually knew better than I did—and I learned something new.

In the second example, if I hadn’t challenged it, I would have gone ahead with a suboptimal practice.

