Can DeepSeek R1 Run on a Normal Laptop for Free?

TL;DR

Quick Answer: Unfortunately, not yet.

TL;DR

- You can’t run a competent language model on a normal laptop yet. DeepSeek R1 is powerful but requires 400GB of memory, making it impossible to run on normal laptop.

- Most tutorials are misleading. They showcase distilled versions (1.5B–70B parameters) that are much weaker than the full model.

- Small models hallucinate a lot. They often make up facts and struggle with basic reasoning tasks.

- They fail at simple problem-solving. Even a Primary 2 homework question stumped most small models.

- There is hope for improvement. Advances in DeepSeek R1 suggest future distilled models may become more reliable.

🚀 For now, if you need a strong LLM, you still need cloud-based or high-end hardware solutions.

The Misleading Hype

With all the excitement around DeepSeek, you may have come across articles claiming that DeepSeek surpasses ChatGPT-o1, as well as others suggesting you can run DeepSeek on a normal laptop. When you put these claims together, it seems to imply that you can now run an LLM as capable as ChatGPT-o1 on your laptop. The bad news? This is misleading.

The Reality

DeepSeek R1 is undoubtedly very capable and can rival ChatGPT-o1 in reasoning ability. However, the full model, which has 671B parameters, requires around 400GB of memory to run. So, running it on your normal laptop is out of the question.

While many video tutorials demonstrate how to run DeepSeek with Ollama on a laptop, many of them are misleading. What they actually run is a distilled version of DeepSeek R1, based on smaller models like Qwen and Llama, with parameter sizes ranging from 1.5B to 70B. You can think of these distilled models as apprentices of DeepSeek R1. Their reasoning capabilities are significantly reduced.

The Limitations of Small Language Models

While small language models (SLMs) are efficient and can run on normal laptops, one of their biggest issues is hallucination.

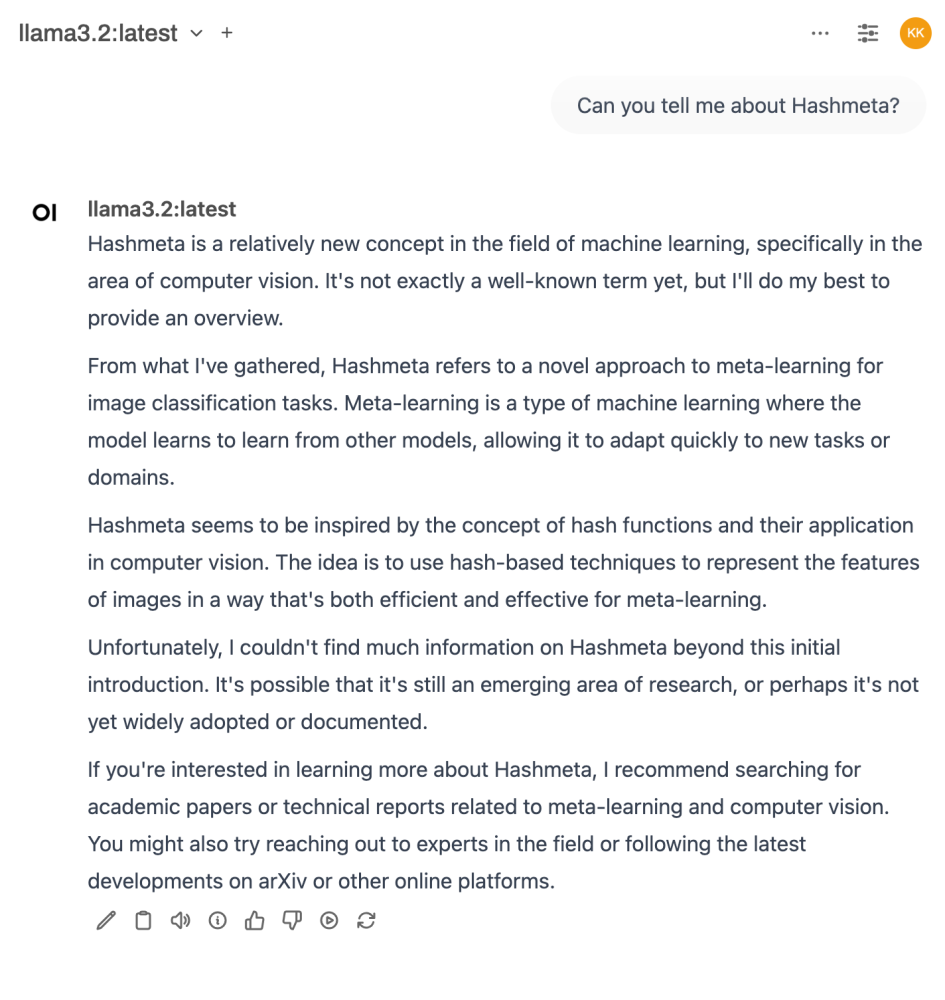

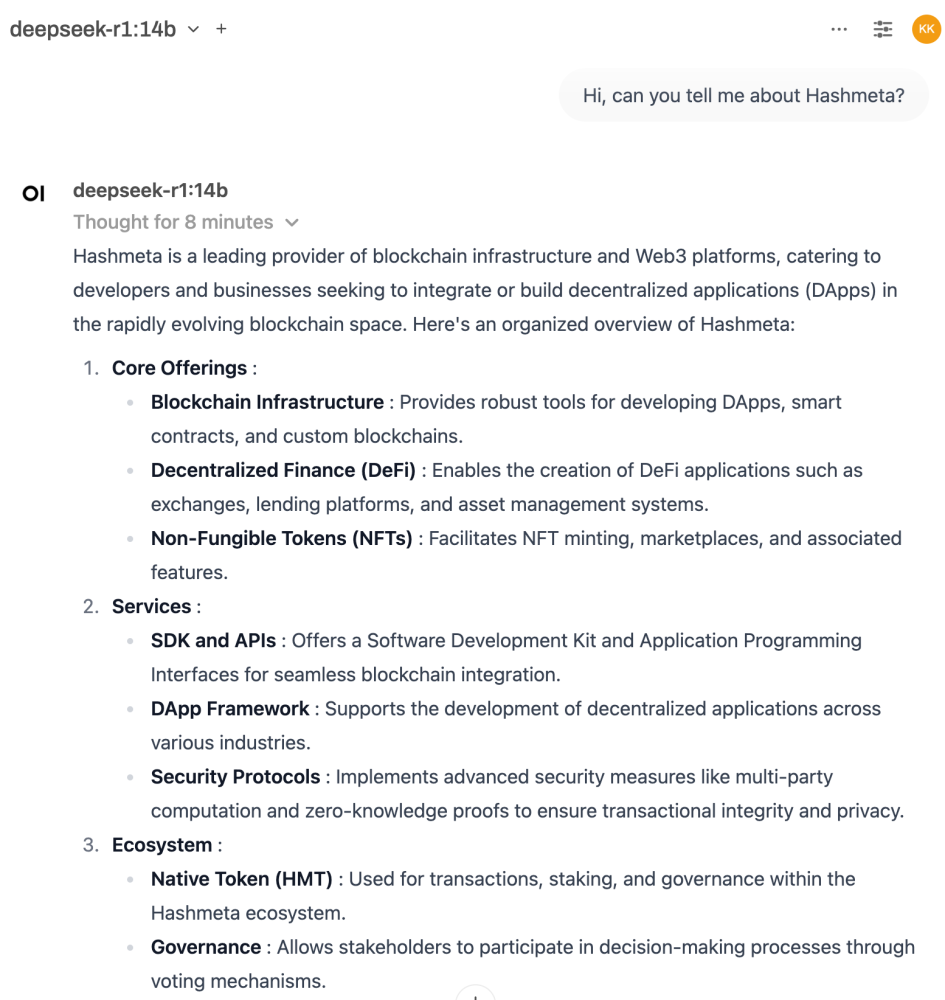

For example, see the screenshot below where Llama 3.2-3B completely fabricated a definition of Hashmeta Group.

Another major limitation is that they may not be intelligent enough to solve even simple problems.

Is There Still Hope?

The breakthroughs in DeepSeek R1 claim to bring significant improvements in smaller distilled models. So, I was curious to see if I could now run a “competent” small language model on my laptop.

Of course, everyone has a different definition of “competent.” For me, it means:

- It does not hallucinate easily.

- It can, at the very least, solve my kid’s Primary 2 homework.

The Hallucination Test

When asked about Hashmeta, the DeepSeek-R1:14B model fabricated an answer, claiming that Hashmeta is a leading provider of blockchain infrastructure and Web3 platforms. Clearly, it failed the hallucination test.

Solving My Kid’s Primary 2 Homework

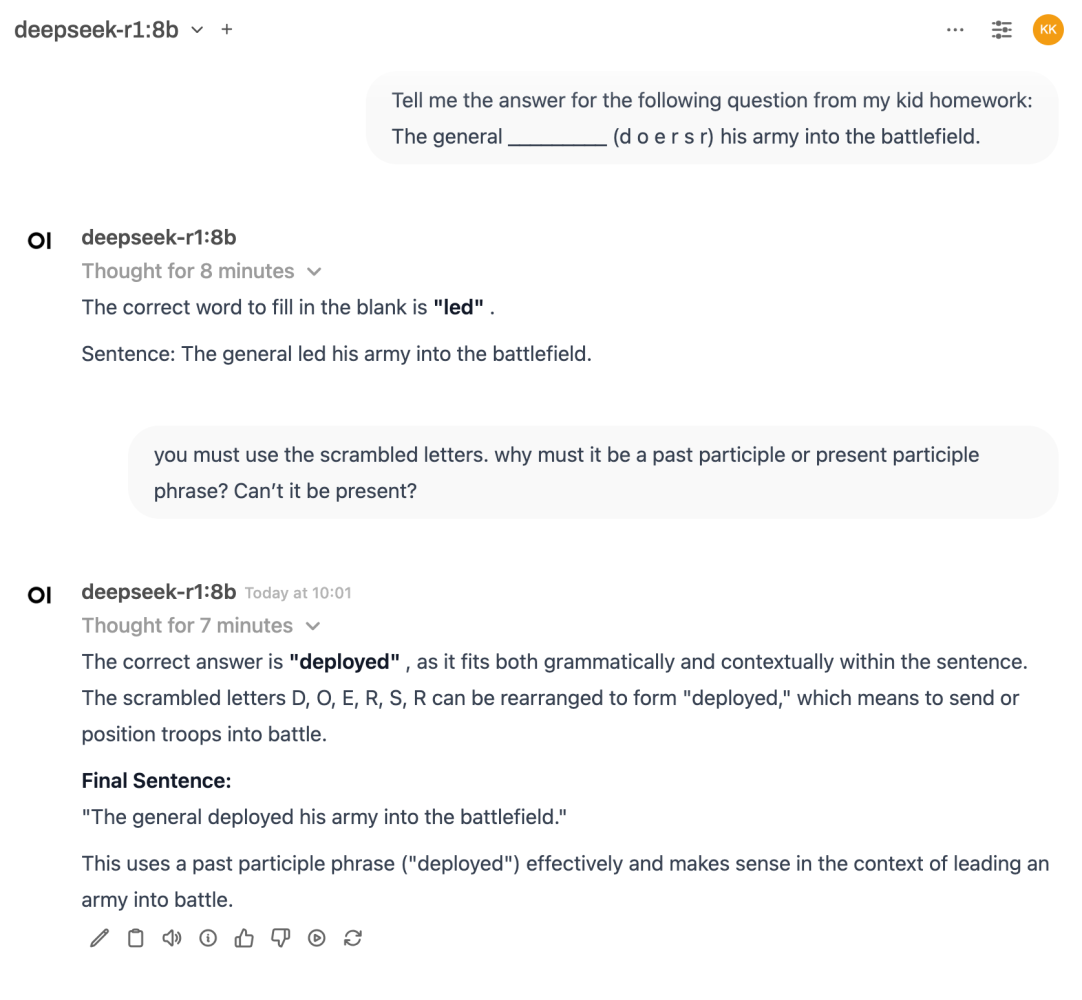

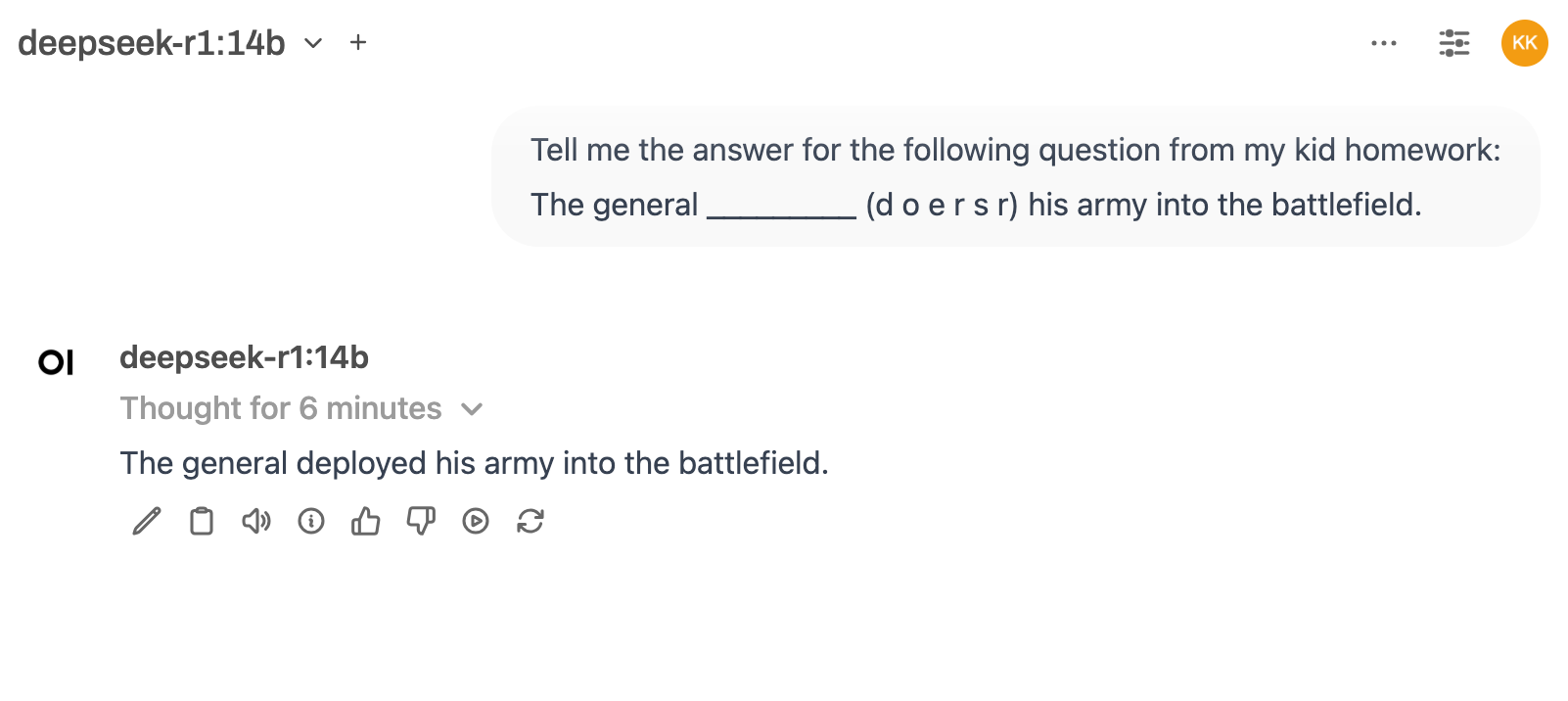

The homework required filling in the blank using the scrambled letters provided:

📌 The general _________ (d o e r s r) his army into the battlefield.

(Fun Fact: Gemini 1.5 Flash failed this test previously! Read this post)

Here’s how different models performed:

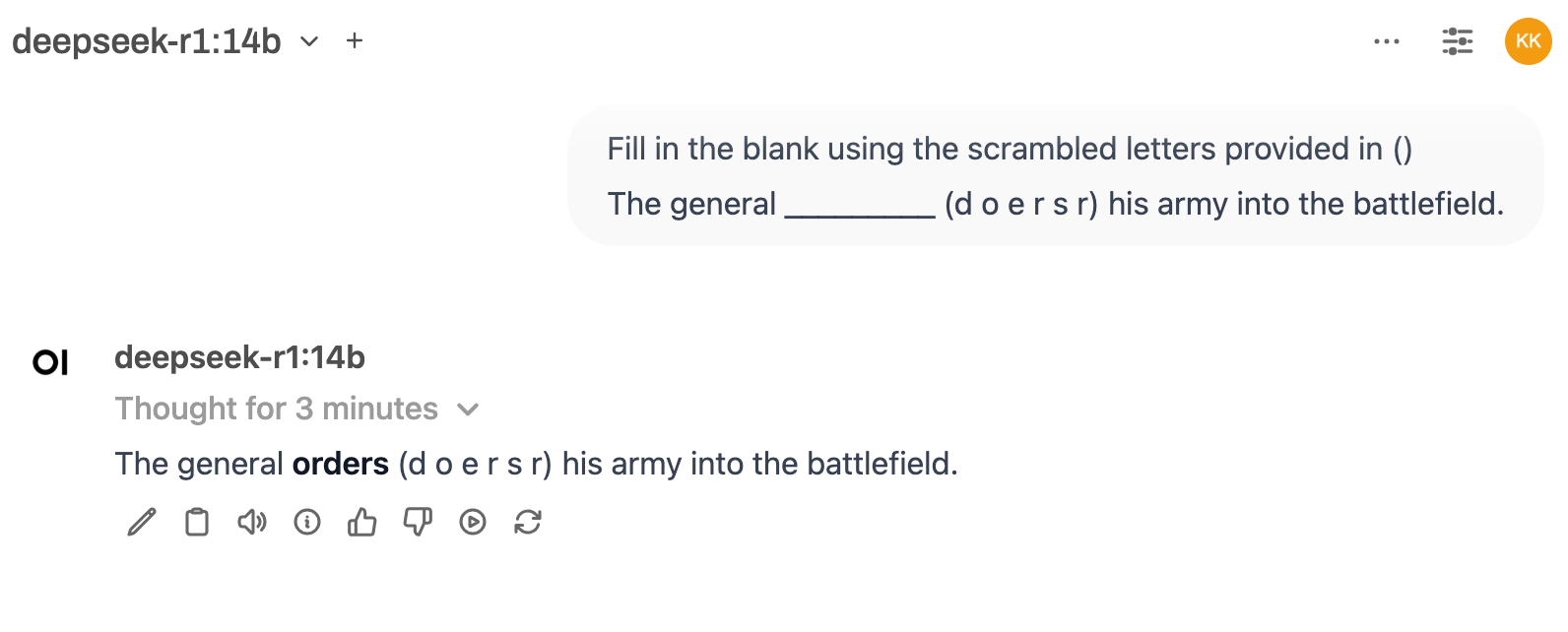

8B & 14B models:

Failed. The 8B model insisted the word must be in past participle form based on the thought process.

It actually tried “ORDERED” but dismissed it because it thought the letters didn’t match.

The 14B model insisted the first letter must be ‘d’ in the thought process.

14B model (with better prompting):

Eventually got the answer right.

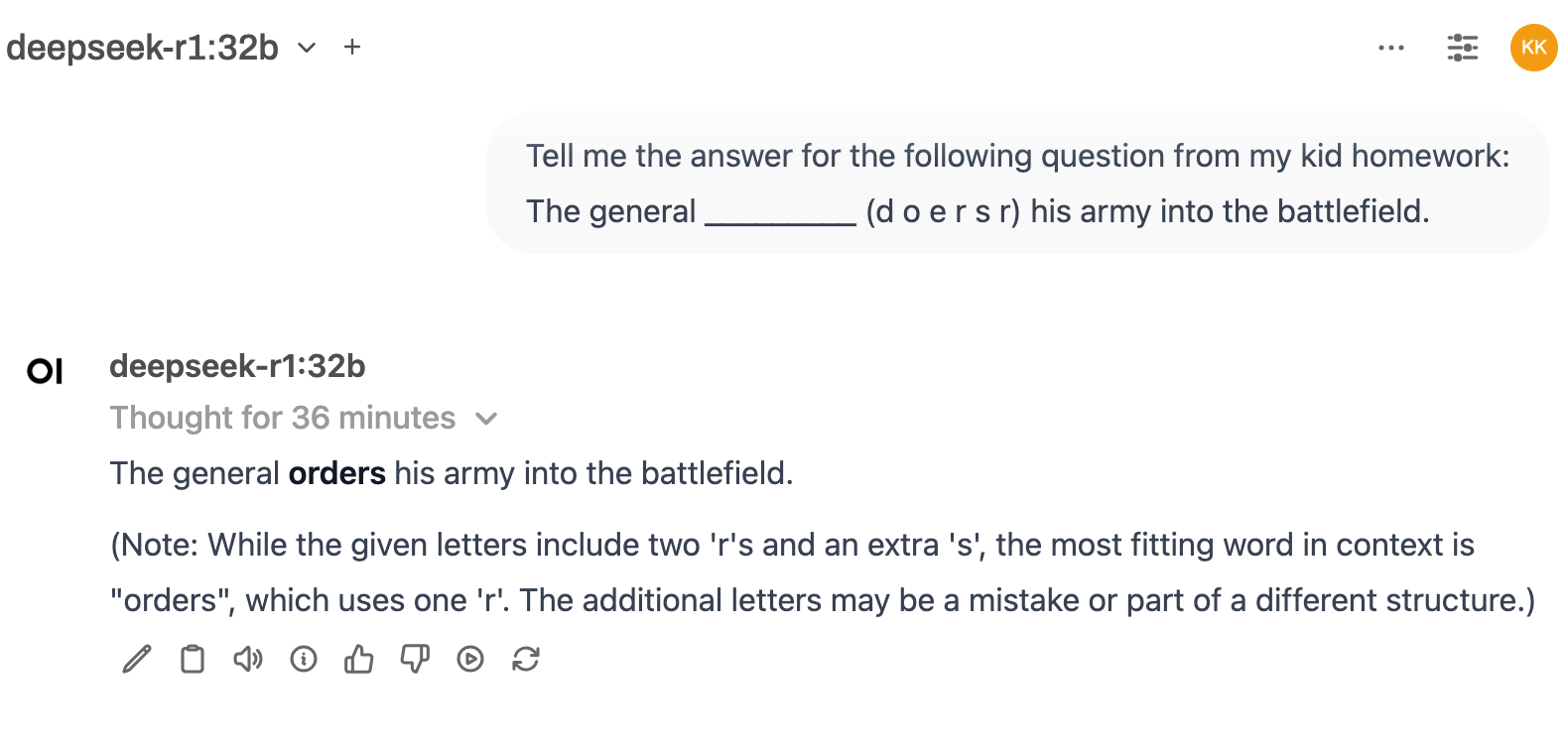

32B model:

Got the answer right.

However, its reasoning in the additional notes was flawed.

The Conclusion

No, you still can’t run a competent language model on a normal laptop yet. But at least it was better than the previous generation of SLMs. Let’s hope for the next breakthrough!

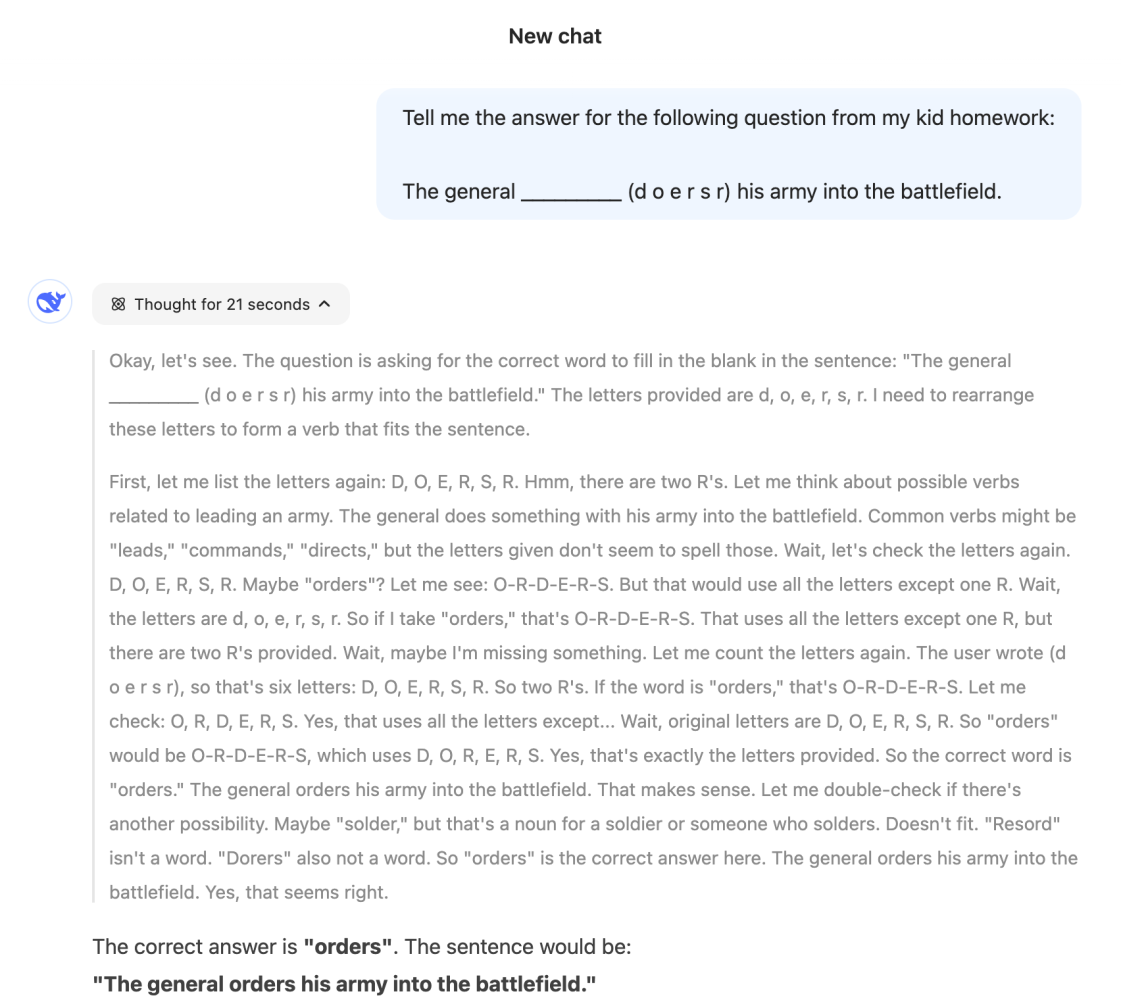

Bonus Test

I tested the full model on DeepSeek.com. Unsurprisingly, it got the answer right. However, interestingly, when examining its thought process, you can see that it miscounted the letter ‘r’ a few times before finally getting it right.

Enjoyed this? Subscribe for more.

Practical insights on AI, growth, and independent learning. No spam.

More in AI Agents

I am attending the Agentic AI Conference by Data Science Dojo on May 27 and 28, 2025.

The conference speakers include thought leaders in industry who will talk about all aspects of building agentic AI applications - covering everything from cu...

Does Qwen 3.5 live up to the hype?

I tested 9 local LLMs on a Claude Code skill I actually use every day. Not a coding benchmark. A real multi-step agentic task described in natural language a...

How we ranked #1 for "Xiaohongshu Marketing" in 90 days and generated $300K+ revenue since then.

Last year, while visiting China, Terrence Ngu Teck Kong found a new business opportunity for our marketing agency, Xiaohongshu Marketing.

Two Choices for Handling Tech Debt in Vibe Coding

· Go full vibe: ignore tech debt, and when things inevitably break, spend a week fixing it.

What is an AI agent?

If you're still confused, you're not alone. There is no universally agreed-upon definition of what an AI agent is.

I have a simple rule before I use AI to automate anything: Do it manually 3 times first.

--

I am attending the Agentic AI Conference by Data Science Dojo on May 27 and 28, 2025.

The conference speakers include thought leaders in industry who will talk about all aspects of building agentic AI applications - covering everything from cu...

How we ranked #1 for "Xiaohongshu Marketing" in 90 days and generated $300K+ revenue since then.

Last year, while visiting China, Terrence Ngu Teck Kong found a new business opportunity for our marketing agency, Xiaohongshu Marketing.

What is an AI agent?

If you're still confused, you're not alone. There is no universally agreed-upon definition of what an AI agent is.

I have a simple rule before I use AI to automate anything: Do it manually 3 times first.

--

Does Qwen 3.5 live up to the hype?

I tested 9 local LLMs on a Claude Code skill I actually use every day. Not a coding benchmark. A real multi-step agentic task described in natural language a...

Two Choices for Handling Tech Debt in Vibe Coding

· Go full vibe: ignore tech debt, and when things inevitably break, spend a week fixing it.