AI Coding Assistants Have a Security Blind Spot


A few months ago, I wrote about a non-technical founder whose SaaS got exploited right after he publicly showed his build process using Cursor (https://lnkd.in/gNCyDgzt).

Attackers maxed out his API usage, bypassed subscriptions, and even messed with his database.

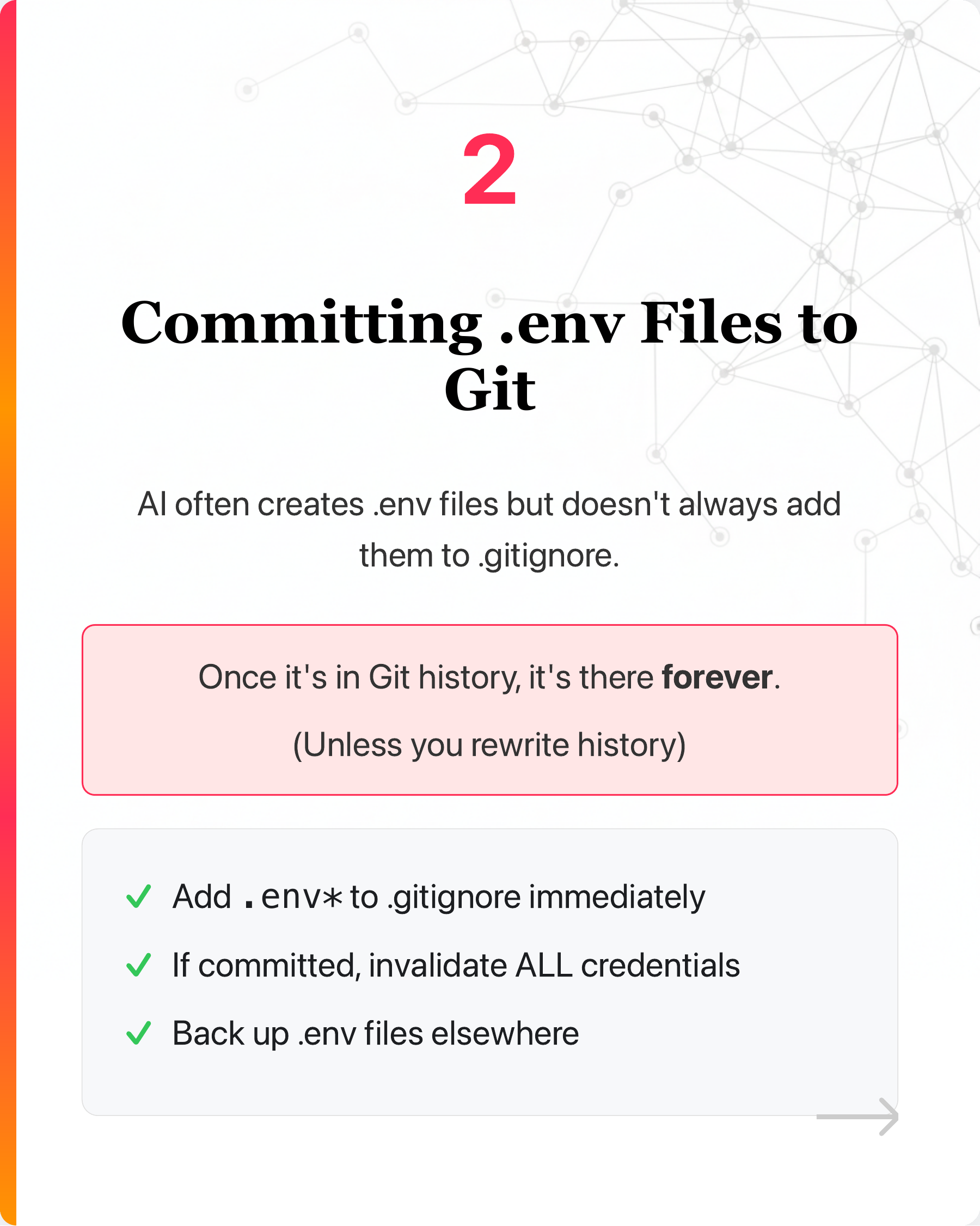

Since then, I have seen more examples of how AI coding assistants introduce security flaws into code.

Swipe the carousel to see 6 ways AI creates vulnerabilities ➡️

Including:

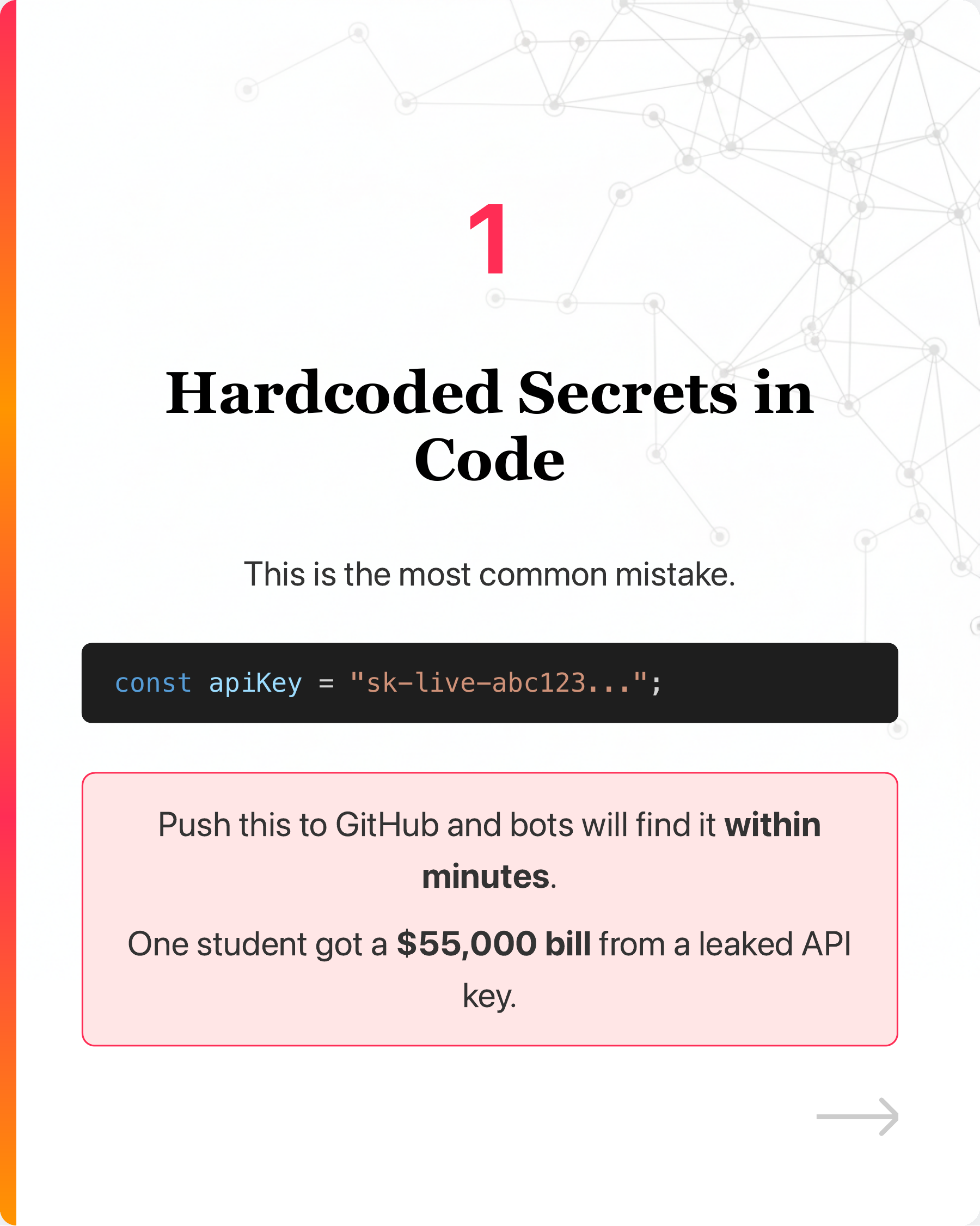

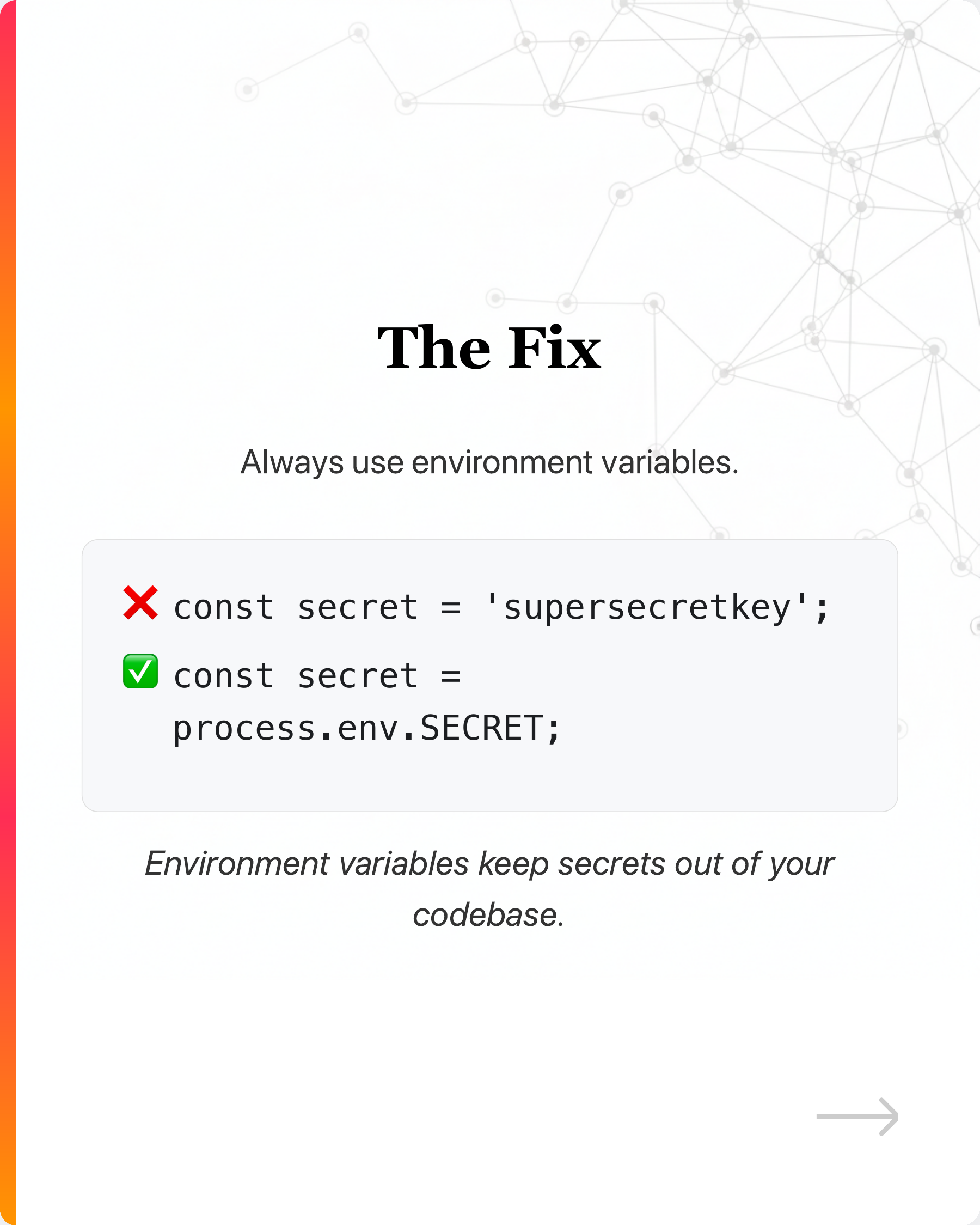

- Hardcoded secrets (a leaked key once cost a student $55k: https://lnkd.in/gF8khzKe)

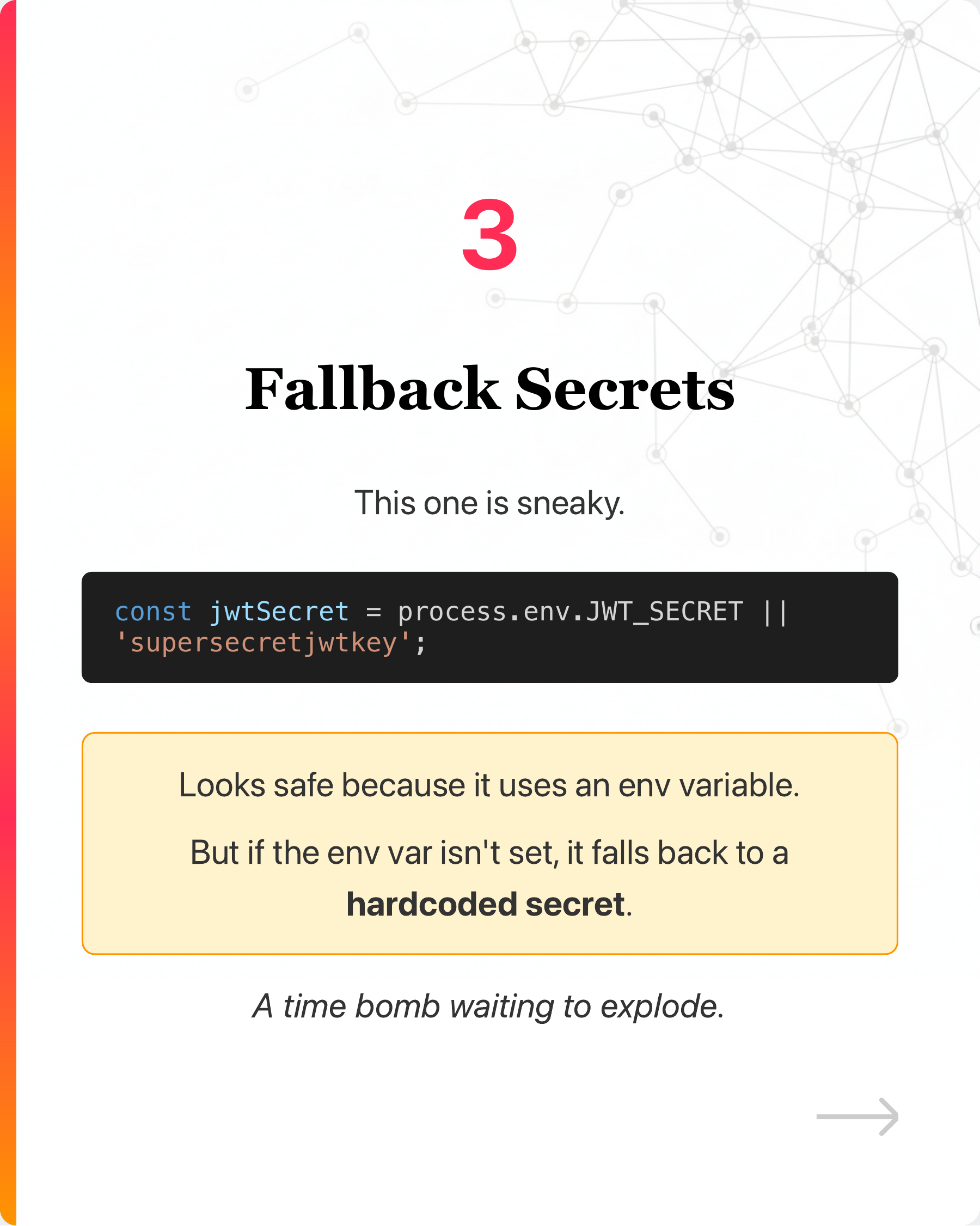

- Fallback secrets that look safe but aren’t (https://lnkd.in/ghpzjRAV)

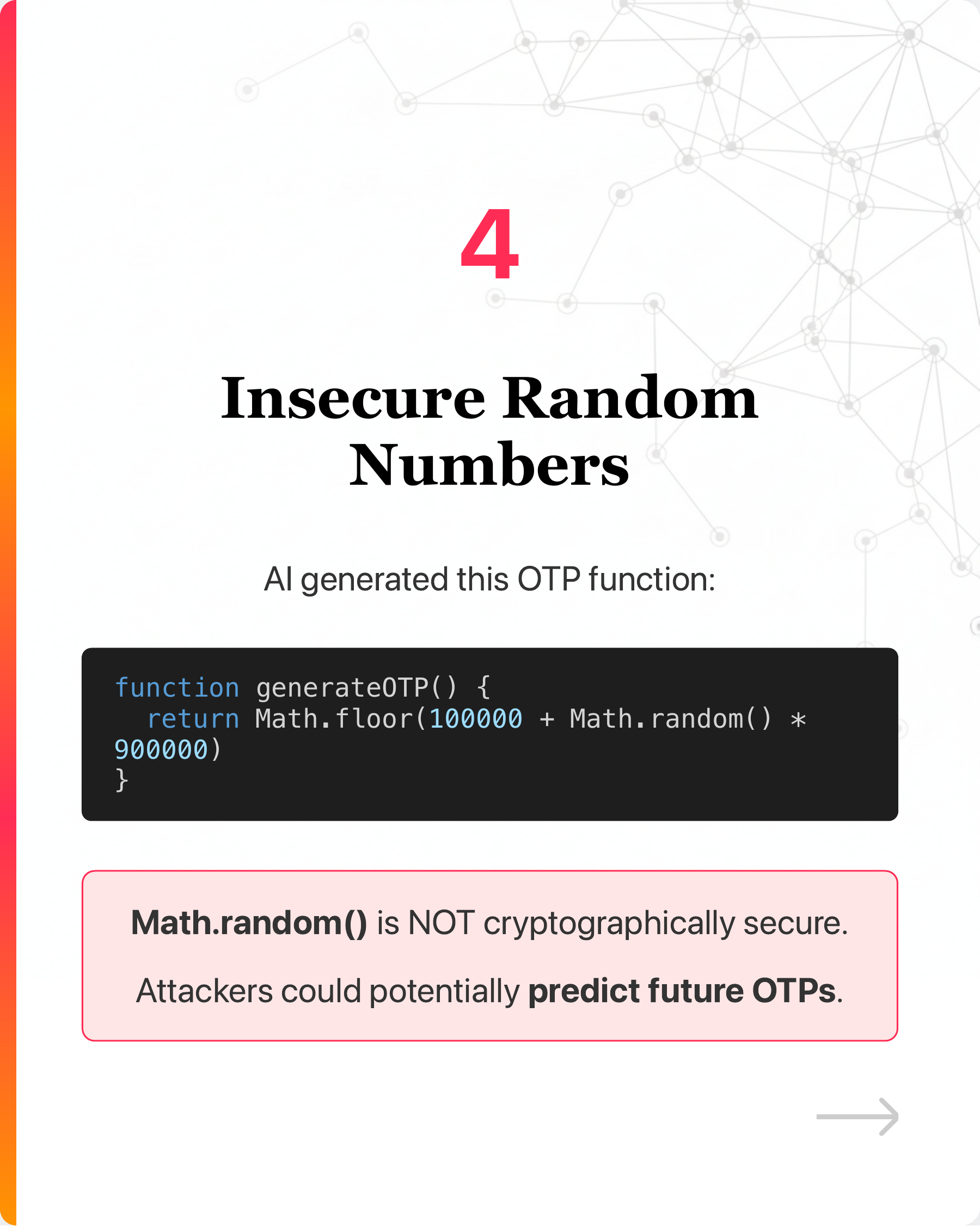

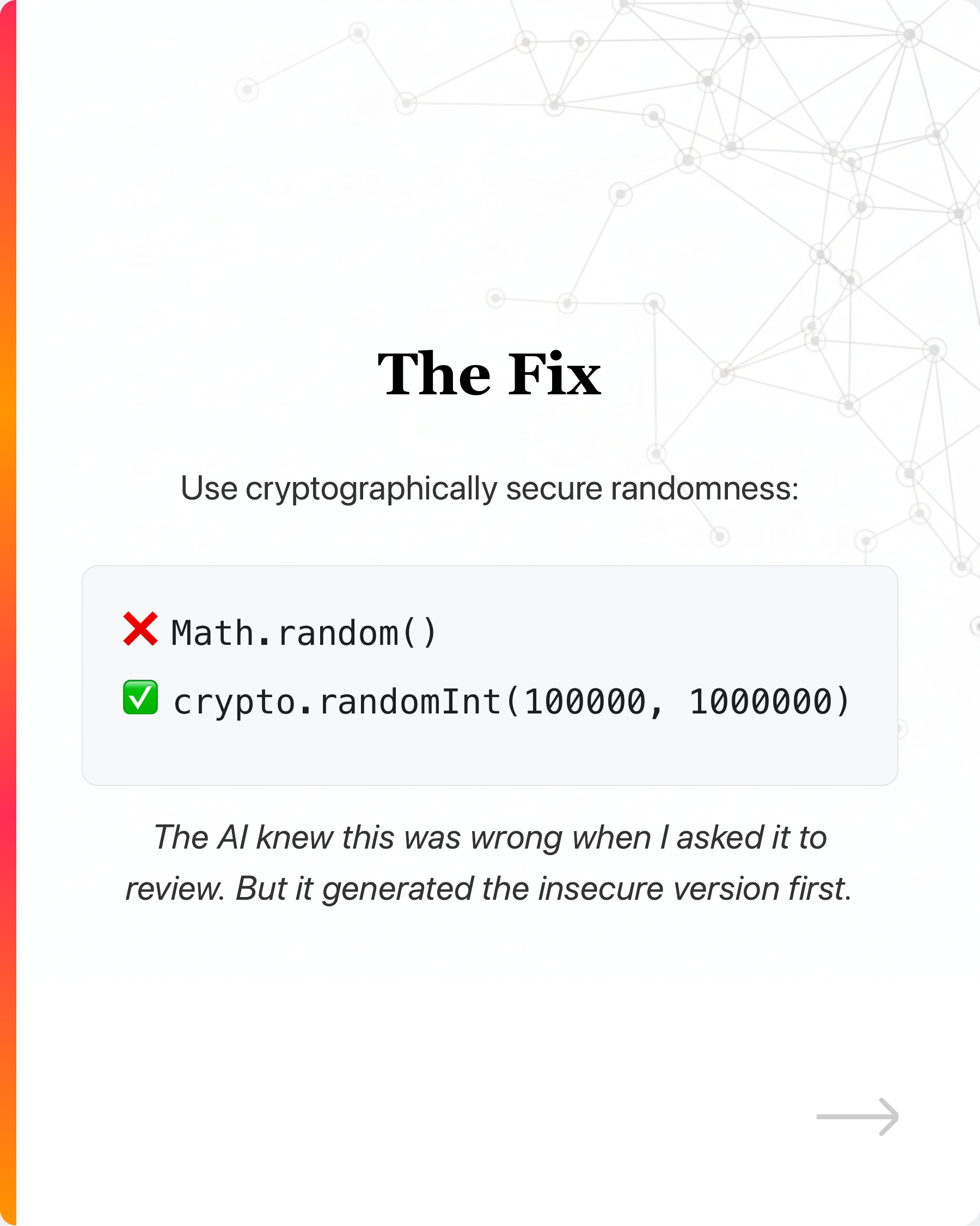

- Insecure random number generation

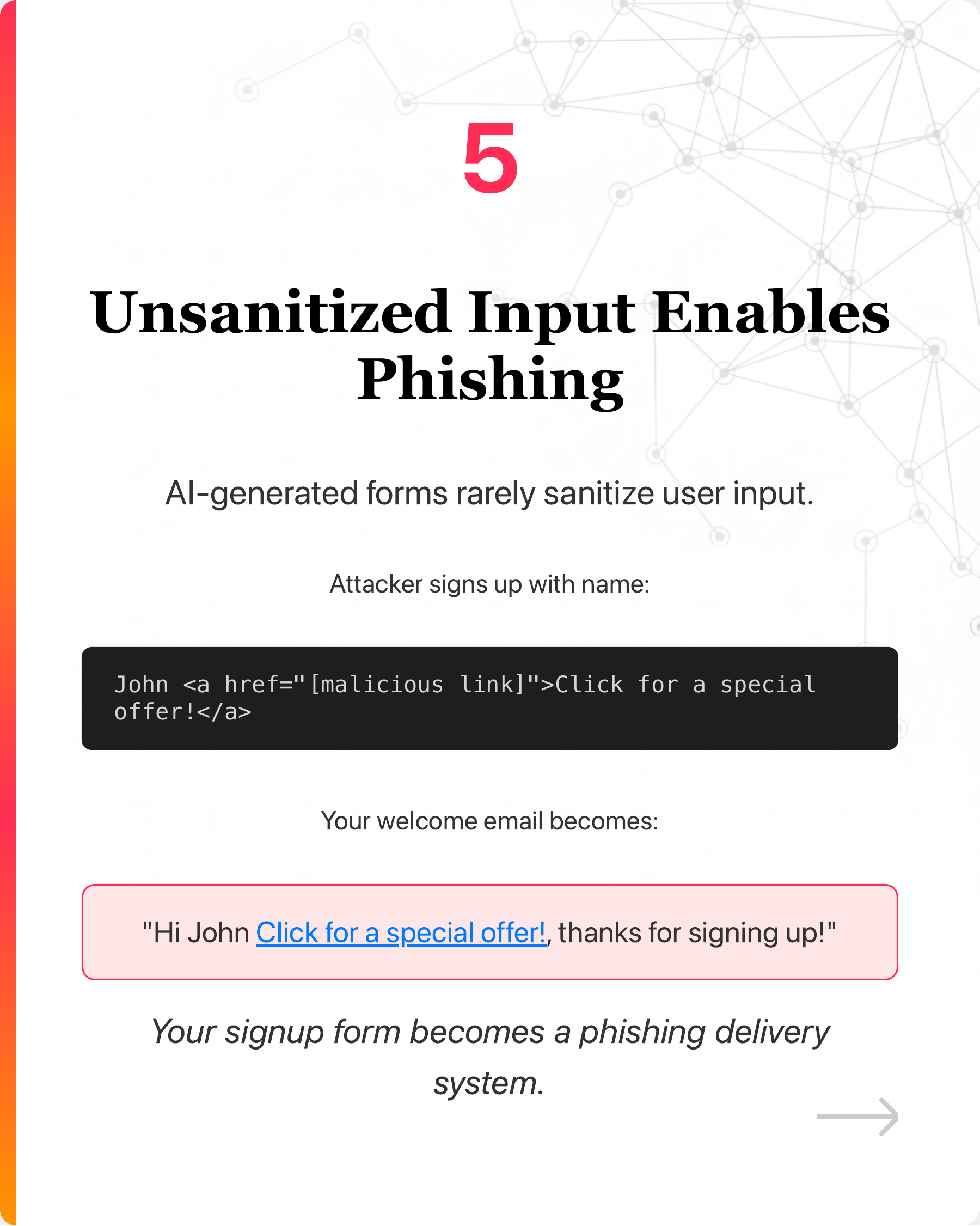

- Unsanitized input enabling phishing

- And more…
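To make a few of these concrete, here is a minimal Python sketch of what the safer version can look like for secrets, random tokens, and user input. It is an illustration under my own assumptions, not the contents of the carousel: the function names (`require_secret`, `new_reset_token`, `greeting_html`) are hypothetical, and I am assuming the "unsanitized input" case means user-supplied text ending up in HTML such as an email body.

```python
import html
import os
import secrets


def require_secret(name: str) -> str:
    """Read a secret from the environment and refuse to run without it."""
    value = os.environ.get(name)
    if not value:
        # No hardcoded key and no "dev-secret" style fallback: fail loudly instead.
        raise RuntimeError(f"Missing required environment variable: {name}")
    return value


def new_reset_token() -> str:
    """Generate an unguessable token from the OS CSPRNG, not the random module."""
    return secrets.token_urlsafe(32)


def greeting_html(display_name: str) -> str:
    """Escape user-supplied text before placing it in HTML (hypothetical email body)."""
    return f"<p>Hello, {html.escape(display_name)}!</p>"
```

The important choice in `require_secret` is that a missing variable stops the app instead of quietly falling back to a default value that anyone reading the code would know.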

—

I created a security.md file you can drop into your project to guide your AI coding assistant based on these blind spots.

Comment “Security” and connect with me if you want a copy of the rules.

—

What security issues have you caught in AI-generated code?

—

I share practical tips about AI, coding and business. Follow me to learn more! Repost this to help others!

#AI #Security #VibeCoding
