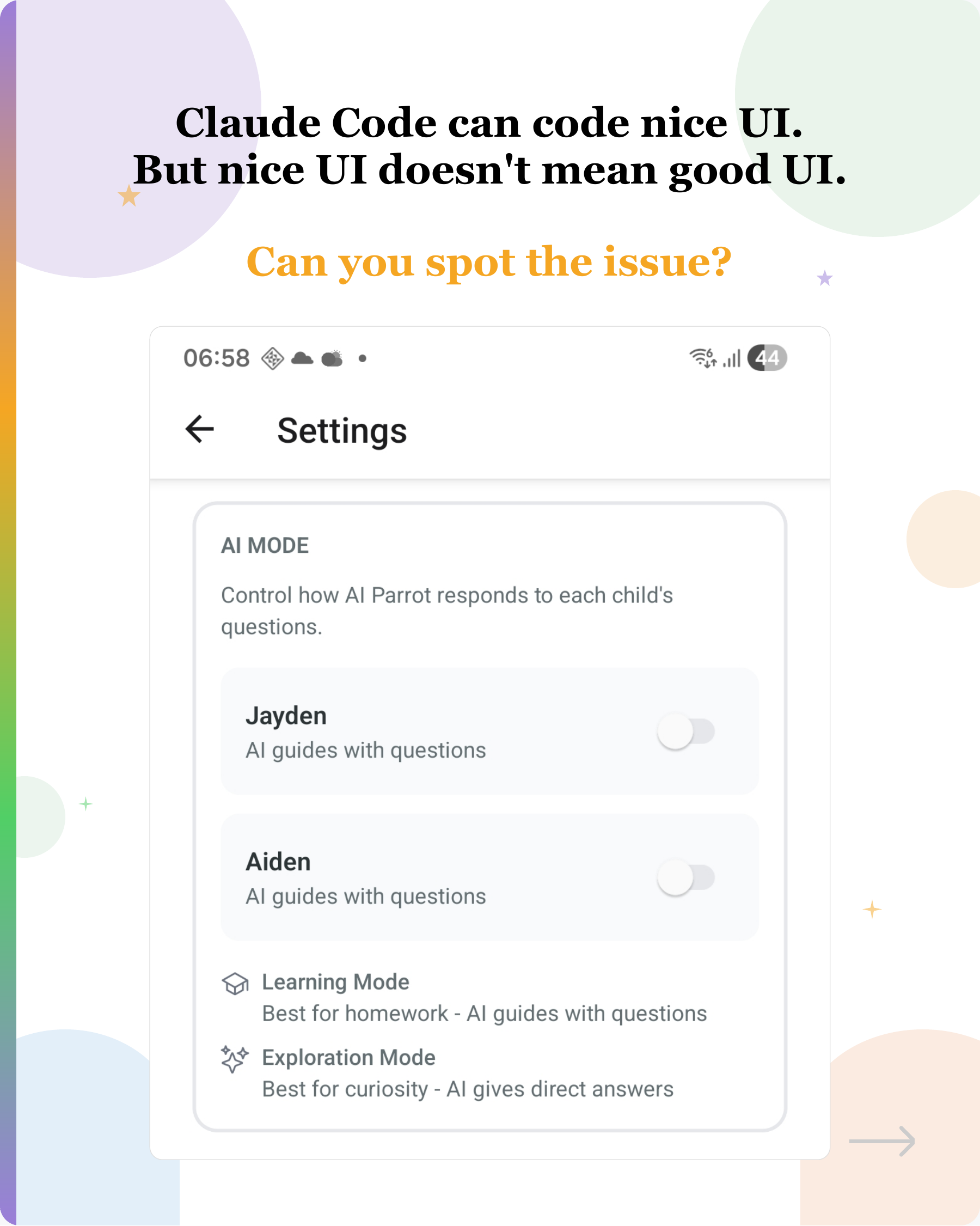

Claude Code can code nice UI. But nice UI doesn't mean good UI.

Manual UI testing is becoming one of my biggest bottlenecks when coding with AI now.

For any new feature, I usually find myself spending about 80% of the time on manual UI testing.

These are two common issues I see:

- The LLM sometimes misjudges which UI component to use.

When I asked Claude Code to add a feature that lets users toggle the AI mode, image 1 is what it coded. It used an on/off switch for a choice between two modes. It looks decent, but it's ambiguous. What does ON mean? Does it turn on Learning Mode or Explore Mode?

One way to solve this is probably to give it best practices for choosing components, packaged as a skill?
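To make the idea concrete, here is a rough sketch of what one such component-choice rule could look like if written down as code. Everything in it is my own assumption for illustration: the `Setting` shape, the `chooseControl` and `isBinaryState` names, and the cutoff of four options are hypothetical, not taken from Claude Code or from any design system.

```typescript
// Hypothetical rule of thumb for picking a control; not an official guideline.
type ControlKind = "switch" | "segmented-control" | "picker";

interface Setting {
  label: string;
  // The mutually exclusive values the user can pick from.
  options: string[];
}

// A setting is a true on/off state only when its two options are opposites
// of a single feature (e.g. "On"/"Off"), not two distinct named modes.
function isBinaryState(options: string[]): boolean {
  if (options.length !== 2) return false;
  const normalized = options.map((o) => o.trim().toLowerCase()).sort();
  const oppositePairs = [
    ["off", "on"],
    ["disabled", "enabled"],
    ["no", "yes"],
  ];
  return oppositePairs.some(
    ([a, b]) => normalized[0] === a && normalized[1] === b
  );
}

function chooseControl(setting: Setting): ControlKind {
  // On/off state of one feature: a switch is unambiguous.
  if (isBinaryState(setting.options)) return "switch";
  // A few named choices: show them side by side so each one is labeled.
  // The cutoff of 4 is an assumed value, chosen only for this sketch.
  if (setting.options.length <= 4) return "segmented-control";
  // Many choices: fall back to a picker.
  return "picker";
}

console.log(
  chooseControl({ label: "AI mode", options: ["Learning Mode", "Explore Mode"] })
); // segmented-control
console.log(
  chooseControl({ label: "Notifications", options: ["On", "Off"] })
); // switch
```

In practice a skill would more likely be written as plain-language guidance than as code, but the rule is the same: a switch only fits when the two options are the on and off states of one thing.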

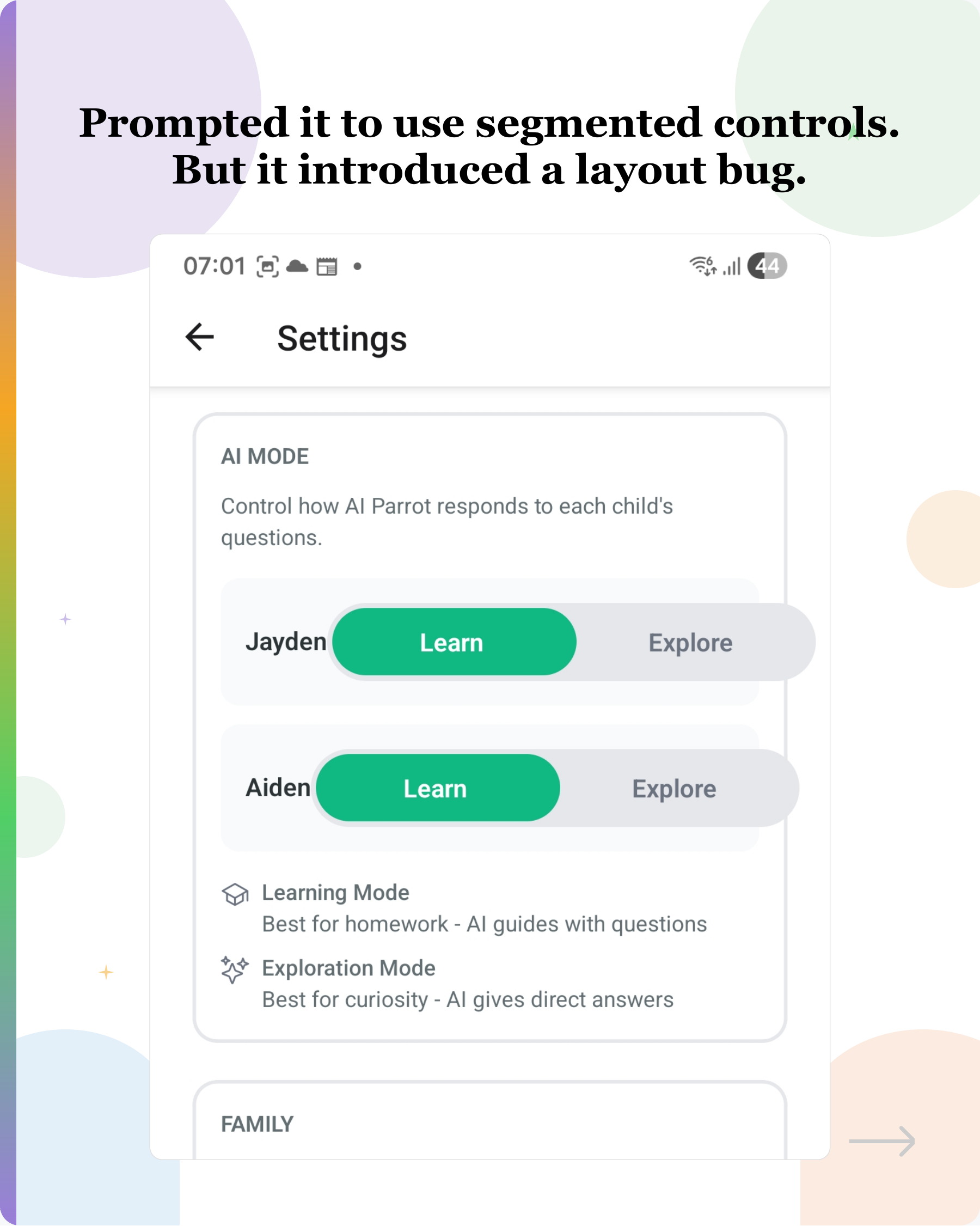

- The LLM can’t see layout issues.

After prompting it about the ambiguity, I asked it to use a segmented control. But it introduced a layout issue.

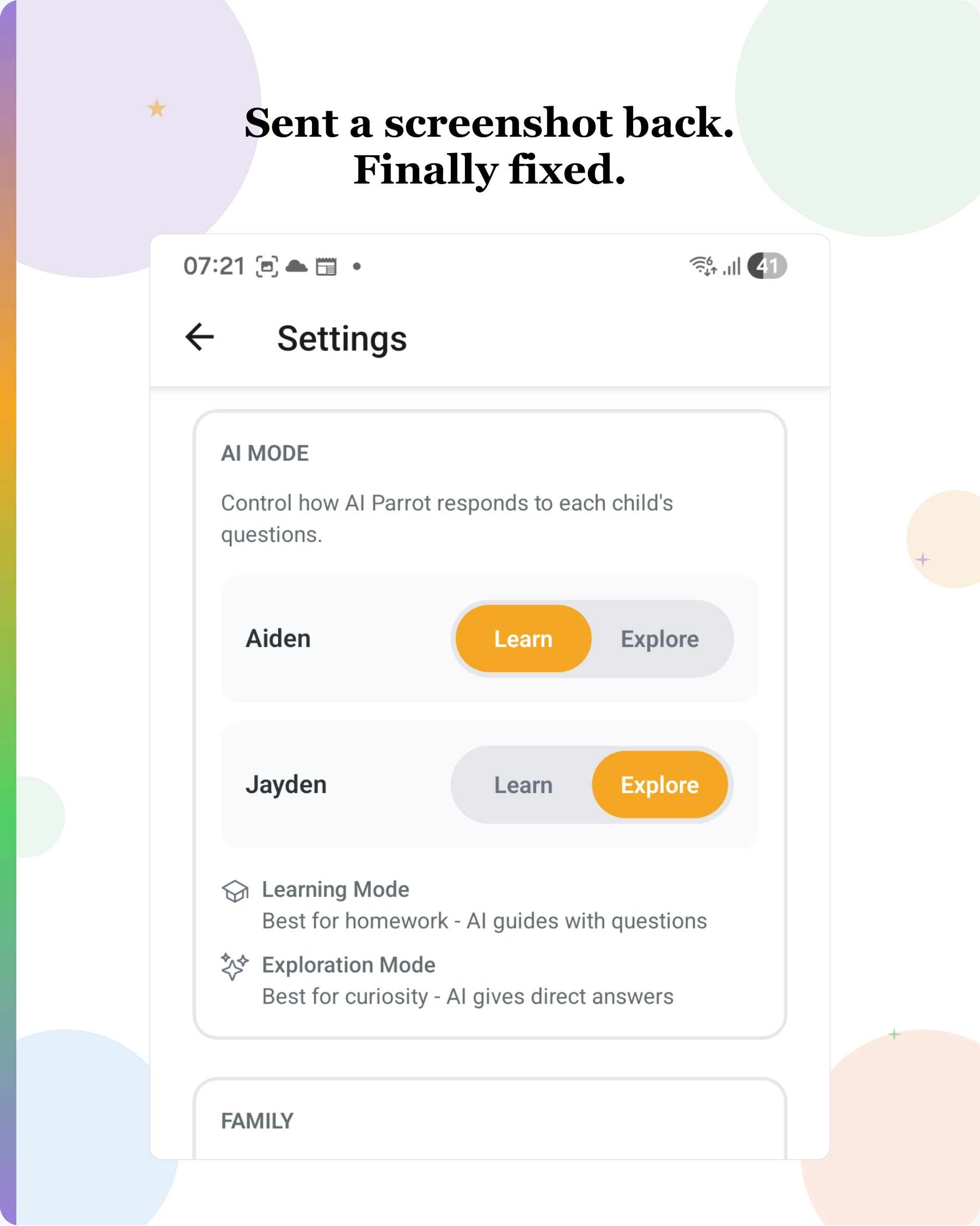

It took an additional prompt to finally get it fixed.

—

This raises a question.

If the LLM could see what it coded, could it catch these obvious issues on its own?

This is something I've just started to explore. Does anyone know of a solution that lets Claude Code (or similar tools) see its own UI output for a mobile app during the agentic loop?

Would love to hear how others handle this.

#AI #CodingWithAI #ClaudeCode #UITesting #UXDesign #DeveloperExperience #LLM
