The Hype Cycle of Claude Code That Everyone Will Go Through

By now, you have probably seen many “RIP software engineering” posts citing Claude Cowork. This post debunks that myth.

The Hype and Confusion

Last week, Boris, creator of Claude Code, shared that he built Claude Cowork with 100% vibe coding in 10 days. It took the software world by storm. Many LinkedIn creators quickly jumped in with posts declaring “RIP software engineering”, welcoming AGI, and more.

But I was confused, for two reasons.

First, in a December podcast, Boris shared that vibe coding has its place, but it’s far from a universal solution. It works well for “throwaway code and prototypes, code that’s not in the critical path”. (Source: https://www.businessinsider.com/claude-code-creator-vibe-coding-limits-boris-cherny-anthropic-2025-12)

Second, after using Claude Code to build 3 mobile apps, I have gone through the Gartner hype cycle of Claude Code, from the Peak of Inflated Expectations to the Slope of Enlightenment. Had I still been at the peak, I would have disagreed with what Boris said on that December podcast.

The Reality

So, what is the reality of Claude Cowork?

Claude Cowork is actually a UI wrapper around Claude Code. So, the reality is that Boris vibe coded a UI wrapper in 10 days, on top of a product his team has been building for a while.

The impressive demo of reorganizing files on the Desktop? That is just a call to Claude Code. And Claude Code could do this months ago.

An LLM Is an Average Coder with Deep Knowledge

If you understand the fundamentals of LLMs, you know that an LLM is an averaging technology. Out of the box, it produces average code - think of code written by a junior or mid-level engineer that still needs code review.

Where it differs from a human at that level is that it also has expert knowledge, which can be unlocked with the right prompt.

This is the mental model I use: an LLM is a software engineer with deep knowledge who, out of the box, always codes like a junior or mid-level engineer.

My Grand Plan

With this insight, I thought that by crafting best practice guides and giving it a good codebase as a reference, it would fly.

I started a mission to unlock the full potential of Claude Code, with a project idea that I wanted to build from scratch. I spent 2 weeks pair programming with Claude Code and reviewing its code. Every time Claude Code produced suboptimal code, I expanded the best practices.

When I finished my first mobile app in 3 weeks, I had ended up with 7 best practice guides covering coding, database, GraphQL, React Native, security, testing, and UX.

The Peak of Inflated Expectations

What I built was not a POC. It is a mobile app with a production-grade backend. It follows best practices, with architecture components like Lambda, SQS, SNS, a CDN, and more.

The wild part came next.

Reusing the guides I had crafted, I vibe coded the 2nd app in 3 days.

This was when I was at the Peak of Inflated Expectations. I thought that from then on, I would be able to launch 1 idea every week!

The Trough of Disillusionment

I built v1 of the two apps without in-app subscriptions. That was intentional, as coding subscriptions is non-trivial. There are many edge cases, like an immediate upgrade mid-period or a deferred downgrade at the end of the period. Each provider (Stripe, Google, Apple) has its own quirks. There are also race conditions between webhooks and the immediate API sync needed for good UX. Even some senior engineers don’t get it right.

It was a true test of LLM coding.

Unfortunately, it failed. The code it produced was so buggy that I needed to create best practices for subscription and webhook.

But what disappointed me wasn’t that it failed on the first try. It failed to follow my best practices for subsequent implementations. It hallucinated and continued making mistakes that ended up costing me 10+ days to finish the implementation.

The #1 Failure

It implemented an event-based webhook on the first try. An experienced engineer who has coded webhooks before will know the issues with this approach. An event-based webhook is simple to code, but it depends on flawed assumptions:

Assumption 1: The webhook is the only source that triggers state changes.

For great UX during a subscription upgrade, we have two sources that trigger subscription state changes: immediate purchase verification via the API, and the webhook. The webhook handler needs to cope with race conditions against state changes caused by the API call.

Assumption 2: Webhooks arrive in the right order.

Over the internet, you can’t guarantee that your server receives webhooks in the right order. When this assumption breaks, it causes various bugs that are difficult to solve.
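To make Assumption 2 concrete, here is a minimal Python sketch. It is an illustration only, not the code from my app: the `Event` shape, the plan names, and the `apply_event` handler are all hypothetical. It shows that an event-based handler ends up with whichever payload arrived last, which is not necessarily what the provider currently holds.

```python
from dataclasses import dataclass


@dataclass
class Event:
    """A hypothetical provider webhook payload (field names are assumptions)."""
    sequence: int  # order in which the provider emitted the event
    plan: str      # plan the subscription moved to


def apply_event(db: dict, user_id: str, event: Event) -> None:
    """Event-based handler: trusts the payload and overwrites local state."""
    db[user_id] = event.plan


# The provider emits two events: free -> pro, then pro -> premium.
emitted = [Event(1, "pro"), Event(2, "premium")]

# Case 1: the events are delivered in the order they were emitted.
in_order = {}
for event in emitted:
    apply_event(in_order, "user-1", event)

# Case 2: the network delivers the same two events in reverse order.
out_of_order = {}
for event in reversed(emitted):
    apply_event(out_of_order, "user-1", event)

print(in_order["user-1"])      # premium
print(out_of_order["user-1"])  # pro (stale: the provider says premium)
```

Nothing in the second run raises an error. The local record is simply wrong, which is part of why these bugs are hard to track down.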

State-based Webhook

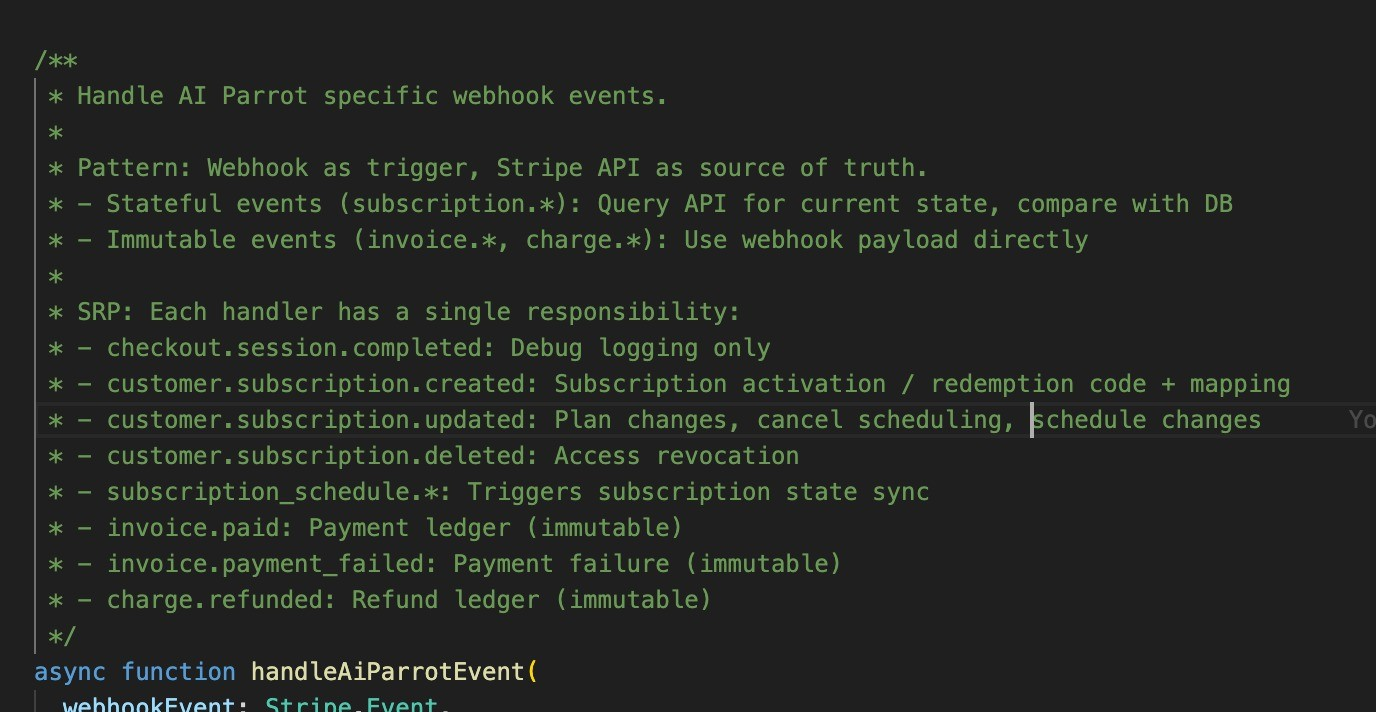

A better architecture is a state-based webhook: the webhook is the trigger, and the provider API is the source of truth. In this architecture, webhooks only serve to inform your server that something changed. Your server ignores the event data in the webhook, apart from identifying which subscription changed. Instead, it calls the provider API to get the latest state, then updates its own records to match.

In my case, the event-based webhook coded by Claude Code was so buggy that I asked it to refactor to a state-based webhook. It went through rigorous planning, worked for 40 minutes, and told me it was done.

But the reality? It hallucinated in some parts. The best one: it added comments saying the function had been refactored to use the state-based architecture, but it didn’t change the code at all.
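The idea can be sketched in a few lines of Python. Treat this as a sketch under assumptions rather than a real integration: `FakeProvider` and its `get_subscription` method are stand-ins I made up for a provider API, and the payload field `subscription_id` is hypothetical. Real SDKs from Stripe, Google, or Apple look different.

```python
class FakeProvider:
    """Stand-in for a provider API; not a real SDK."""

    def __init__(self):
        self._plans = {}

    def set_plan(self, subscription_id, plan):
        self._plans[subscription_id] = plan

    def get_subscription(self, subscription_id):
        # Hypothetical call that returns the provider's current state.
        return {"id": subscription_id, "plan": self._plans[subscription_id]}


def sync_subscription(db, provider, subscription_id):
    """Fetch the provider's current state and copy it into our own store."""
    latest = provider.get_subscription(subscription_id)
    db[subscription_id] = latest["plan"]
    return latest["plan"]


def handle_webhook(db, provider, payload):
    # Only the id is read from the payload; its event data is ignored.
    return sync_subscription(db, provider, payload["subscription_id"])


def verify_purchase(db, provider, subscription_id):
    # The immediate API path runs the same sync, so both paths converge.
    return sync_subscription(db, provider, subscription_id)


provider = FakeProvider()
db = {}

# The user upgrades twice at the provider: pro, then premium.
provider.set_plan("sub-1", "pro")
provider.set_plan("sub-1", "premium")

# The app verifies the purchase immediately, then a stale webhook arrives late.
verify_purchase(db, provider, "sub-1")
handle_webhook(db, provider, {"subscription_id": "sub-1", "plan": "pro"})

print(db["sub-1"])  # premium
```

Because both entry points run the same sync, a late or duplicated webhook can only re-apply the provider's latest state. This sketch is single-threaded; a real handler would still need safe concurrent writes to the database.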

Claude Code added this impressive code comment, without actually changing the function.

The Common Problem

The above is just one example. As I worked more with Claude Code on my 3rd app, I noticed a common pattern. It sometimes claims it has finished its tasks when, in reality, they are not finished. Or it takes shortcuts.

In another example, I asked it to produce seed content for 18 topics. It did the first 4 nicely. For the rest, it wrote the content as “Coming soon”.

Claude Code told me it completed all the 18 topics. But this is what it produced for 14 out of 18 topics.
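One way to catch this kind of shortcut without relying on the agent's own report is a small automated check on the output. Below is a minimal Python sketch. The placeholder phrases, the 50-word threshold, and the `find_unfinished` helper are my assumptions for illustration, so tune them to your own content.

```python
import re

# Phrases that suggest a stub. This list is an assumption; extend as needed.
PLACEHOLDER = re.compile(
    r"\b(coming soon|todo|placeholder|lorem ipsum)\b", re.IGNORECASE
)


def find_unfinished(topics: dict[str, str], min_words: int = 50) -> list[str]:
    """Return the names of topics whose content looks like a stub."""
    unfinished = []
    for name, content in topics.items():
        too_short = len(content.split()) < min_words
        if too_short or PLACEHOLDER.search(content):
            unfinished.append(name)
    return unfinished


# Two made-up topics: one with real length, one stubbed out.
topics = {
    "topic-01": "word " * 200,
    "topic-02": "Coming soon",
}

print(find_unfinished(topics))  # ['topic-02']
```

A check like this does not judge quality. It only flags output that was obviously skipped, so you know where to look before accepting “done”.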

My Hypothesis, The Verdict and What Can We Do?

Based on various articles shared on the Internet, my hypothesis is that this is due to context collapse. Claude Code's instruction following appears to start deteriorating once the context window is about 50% full.

So, don’t expect it to do a good job when given a big task.

Planning mode is not fully reliable either. When the task is too big, Claude Code may not fully follow the plan. Or, as I shared earlier, it may complete the plan by taking shortcuts.

For now, if you want to get the most out of Claude Code, do rigorous planning and break tasks into bite-sized chunks. Then ask it to do them one by one.

It is still good to have best practice guides, even though it may not fully follow them. What I am doing is repeatedly asking it to audit its code against the best practices. That will at least prevent your tech debt from piling up too fast.

What Is The Future of Agentic Coding?

I have not lost hope for autonomous agentic coding, but I don’t think we will get there with the current architecture and workflow. I believe we can reach the next level of autonomous coding with this:

- Multi-agent orchestration - one agent per best practice.

- Workflow to review code with specialized agents continuously.

It is something I will explore in my next project.

So, what is your experience with Claude Code? Any other tips to share?
