I finally concede that AI is smarter than me.

For 2 years, I held onto reasons like “AI can't solve my kid's homework” or “It can't play tic-tac-toe” to believe I was still smarter.

AI still can’t solve my kid’s Primary 2 homework. It still can’t win at tic-tac-toe. And yes, it sometimes hallucinates.

These were always my reasons for believing I was smarter than AI.

My relationship with AI is akin to that of a father and son, or a master and apprentice. I’ve been involved in AI research since 2007. I’ve witnessed its evolution, from simple neural networks to deep learning, and now, transformers. Like any father or master, I thought I’d always be better. Until one day, I realized my son or apprentice had surpassed me.

Initial Doubts and the Two Camps

When ChatGPT launched, the AI research community split into two camps.

One believed we’d achieved a new form of artificial intelligence. The other dismissed it as just advanced text completion.

I was in the latter camp. A post from Uli Hitzel made me reflect on how that thinking may have done a disservice to the AI research community. We don’t fully understand why an algorithm designed for text completion suddenly seems to have intelligence. But that doesn’t mean it doesn’t.

Since then, I have been trying different things to test whether there are signs of intelligence in ChatGPT, Gemini and Claude. I asked them to solve puzzles, play tic-tac-toe, solve my kid’s primary school homework and more. Sometimes, they amazed me. But sometimes, they failed miserably at the simplest tasks. So I concluded: perhaps there’s some intelligence, but it’s not smarter than a human yet.

A New Perspective

Last week, during a discussion about the potential of AI, a comment from Wan Wei, Soh struck me. She told me I am very optimistic about human intelligence. She has always thought AI is smarter than her. That comment challenged my assumption and has been ringing in my mind ever since.

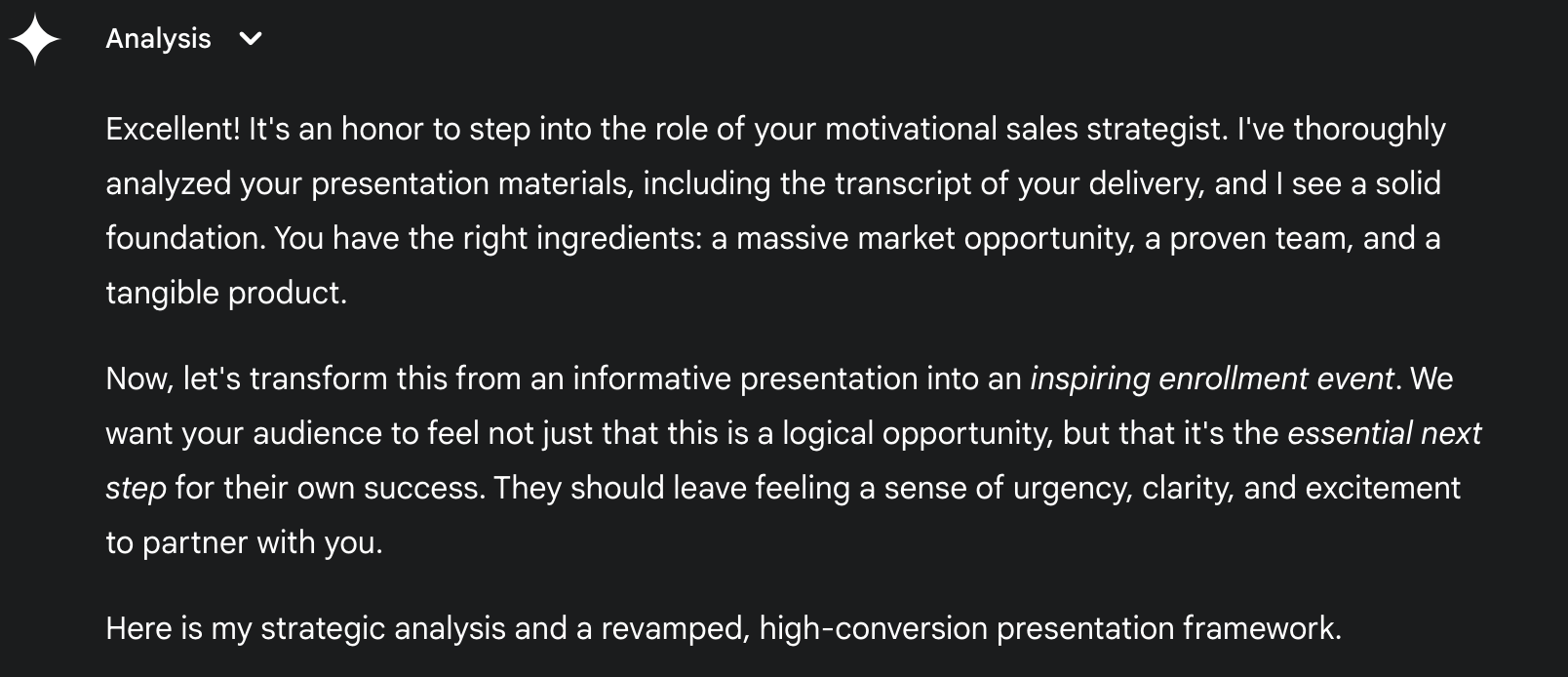

I recalled that just a week earlier, I had recorded my webinar presentation, transcribed it, and given it to ChatGPT, Gemini and Claude, together with my presentation slides. I asked them to make it more inspiring and increase the conversion rate. I was wowed by the suggestions.

AI pointed out something shockingly accurate. My presentation was informative, but not inspiring. This is a common weakness of people with an engineering background like me. It followed up with some very good suggestions to improve the presentation.

Doesn’t that mean AI was smarter than me?

Gemini accurately pointed out that my presentation was informative rather than inspiring.

The Turning Point

But it wasn’t until 2 days ago that I finally conceded defeat.

I was helping a client set up a WhatsApp AI agent using an open-source tech stack. I ran into a bug: the system couldn’t sync templates from Meta. I tried everything: I read the documentation, Googled, and even asked ChatGPT, Claude, Gemini, and Perplexity. Nothing helped.

After wasting an entire day, I thought I had no choice but to spend more time, probably days, understanding the project and its code so I could troubleshoot it myself.

I suddenly thought: why haven’t I tried Cursor?

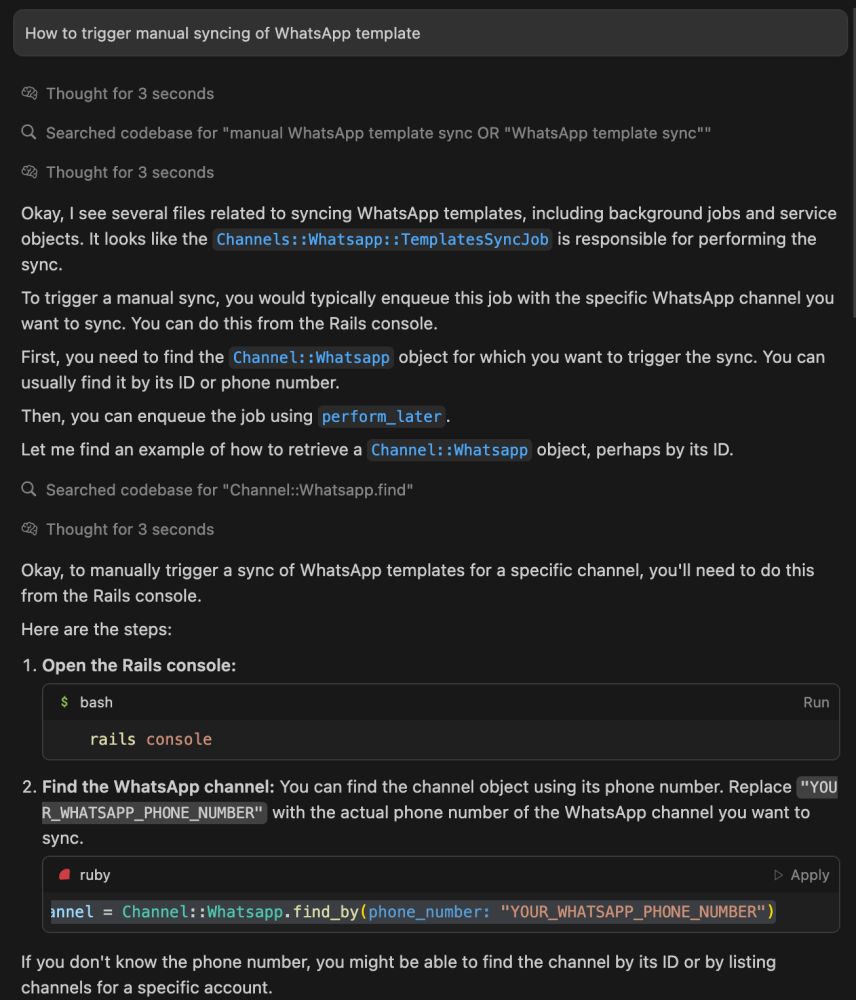

I cloned the project, opened it in Cursor, explained the problem, and asked how to trigger a template sync.

In under 2 minutes, AI scanned the codebase and gave me step-by-step instructions.

I followed them. Problem solved.

That moment hit me hard: AI solved in 2 minutes what had blocked me for a whole day. It beat me at what I am good at.

A simple prompt to Cursor solved in 2 minutes a problem I had struggled with for a day.

Unconscious Bias

In real life, we always ask smarter people for help when we’re stuck. But I didn’t go to Cursor right away because, deep down, I still thought I was smarter, especially in coding.

That unconscious bias cost me a full day.

Acceptance and the Way Forward

Yes, AI can’t solve a Primary 2 question, can’t play tic-tac-toe, and can’t always code as well as I can.

This doesn’t mean I’m smarter than AI. It just means I am smarter than AI at THESE tasks.

But AI is already smarter than me in many other areas, and that list is growing.

The sooner we accept this, the sooner we can truly delegate and unlock the full power of AI.

I’ve come to realize that the people who are mastering AI the fastest are the ones who accept it’s smarter than them and treat it that way.

If you still think you’re smarter than AI, you might want to think again.

Final Thought

I’ve come to think that we should call AI an Alternative Intelligence. Why? It beats humans handily at many tasks, yet can fail miserably at others that are simple for us.

Therefore, it is not exactly like our intelligence. It is like an alternative to our intelligence.
